10 Game-Changing AWS AI Services

The first time I played around with AWS AI services was when I was studying for my AWS Solutions Architect Associate certification. I was just trying to get a basic understanding of AI in the cloud. What started as exam prep quickly turned into me realizing just how powerful these tools really are. Trust me, some of these services feel like they are straight out of a sci-fi movie. Let us dive into 10 AWS AI services that will blow your mind!

1. Amazon SageMaker – The ML Powerhouse

Ever wanted to build a machine learning model but did not want to deal with all the messy setup? SageMaker is your best friend. It handles the heavy lifting of training, tuning, and deploying ML models, so you can focus on making your AI do cool stuff. If you have ever struggled to set up Jupyter notebooks manually, SageMaker's managed notebooks feel like having a personal assistant.

2. Amazon Rekognition – The Eyes of AI

Rekognition is like giving your applications super-vision. It can analyze images and videos, detect faces, read text, and even estimate emotions from facial expressions. Want to build a security system that detects intruders? Rekognition's got you. Ever wonder how those auto-tagging features in photo apps work? This is the kind of service behind them.

3. Amazon Comprehend – The Text Analyzer

If you have a bunch of text and need to pull out key insights, Comprehend is your go-to. It detects sentiment (is that review good or bad?), extracts key phrases, and even identifies people and places. It is like having an AI-powered detective for text analysis.
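To give a feel for what calling Comprehend looks like from Python, here is a minimal sketch using boto3's `detect_sentiment` operation, which returns an overall label plus a score for each label. It assumes boto3 is installed and AWS credentials and a region are configured; the helper names `summarize_sentiment` and `analyze_review` are hypothetical.

```python
from typing import Tuple


def summarize_sentiment(response: dict) -> Tuple[str, float]:
    """Pick the overall label and its score from a DetectSentiment response."""
    label = response["Sentiment"]  # POSITIVE, NEGATIVE, NEUTRAL, or MIXED
    # SentimentScore keys are capitalized words such as "Positive" or "Mixed"
    score = response["SentimentScore"][label.capitalize()]
    return label, score


def analyze_review(text: str, language_code: str = "en") -> Tuple[str, float]:
    """Send one piece of text to Amazon Comprehend (needs AWS credentials)."""
    import boto3  # imported here so summarize_sentiment works without boto3

    client = boto3.client("comprehend")
    response = client.detect_sentiment(Text=text, LanguageCode=language_code)
    return summarize_sentiment(response)
```

Keeping the response parsing in its own function means you can test it on sample data without making any AWS calls.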

4. Amazon Polly – Your AI Voiceover Artist

Polly turns text into speech, and not in that robotic, old-school way. It has natural-sounding voices, different accents, and multiple languages. Want to create an audiobook, a voice assistant, or a talking fridge? Polly makes it easy.

5. Amazon Lex – Build A Chatbot

Lex is built on the same deep learning technology that powers Alexa, and it lets you create chatbots and voice assistants with conversational AI. Whether you want to build a customer service bot or a virtual assistant that reminds you to drink water, Lex has your back.

Congrats! You’ve officially made it to the halfway point of this blog post. If this were a marathon, you’d be at the water station grabbing a Gatorade. But since this is AWS AI, consider this your quick recharge before we dive into even more mind-blowing services!

6. Amazon Textract – OCR on Steroids

Scanning documents is one thing, but extracting structured data from them? That is next level. Textract can pull tables, forms, and key information from documents, making it perfect for automating paperwork-heavy tasks. Think of it as an AI intern that never sleeps.

7. Amazon Transcribe – AI That Listens

Have a ton of audio files but do not want to sit and transcribe them? Amazon Transcribe turns speech into text, whether it is from meetings, podcasts, or customer service calls. It even recognizes different speakers. This is like having an AI note-taker that never misses a word.

8. Amazon CodeWhisperer – AI-Powered Coding Assistant

If you have used GitHub Copilot or ChatGPT for coding, CodeWhisperer is AWS's answer to that. It is an AI coding assistant that suggests lines of code as you type, helping you write code faster. Whether you are working on Python, Java, or JavaScript, it is like having an AI-powered pair programmer right in your IDE. (CodeWhisperer has since been folded into Amazon Q Developer.)

9. Amazon Bedrock – AWS’s Generative AI Platform

If you want to build your own ChatGPT-like applications but with enterprise-level control, Amazon Bedrock is the way to go. It gives you access to foundation models from AI providers like Anthropic and Stability AI, allowing you to build chatbots, generate images, summarize text, and more, all without managing the underlying infrastructure. Think of it as AWS's generative AI playground.
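Here is a minimal sketch of sending a prompt to a Bedrock model with boto3's Converse API (`bedrock-runtime` client, `converse` operation). It assumes boto3 is installed, credentials are configured, and your account has access to the model you pass in as `model_id` (for example, a Claude model ID copied from the Bedrock console). The helper names are hypothetical.

```python
from typing import List


def build_messages(prompt: str) -> List[dict]:
    """Wrap a single user prompt in the message format the Converse API expects."""
    return [{"role": "user", "content": [{"text": prompt}]}]


def extract_text(response: dict) -> str:
    """Join the text blocks of the model's reply from a Converse response."""
    blocks = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in blocks)


def ask_model(prompt: str, model_id: str, max_tokens: int = 256) -> str:
    """Send one prompt to a Bedrock foundation model (needs credentials and model access)."""
    import boto3  # imported here so the helpers above work without boto3

    client = boto3.client("bedrock-runtime")
    response = client.converse(
        modelId=model_id,
        messages=build_messages(prompt),
        inferenceConfig={"maxTokens": max_tokens},
    )
    return extract_text(response)
```

Because Converse uses the same request shape across providers, switching models is mostly a matter of changing `model_id`.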

10. Amazon Kendra – AI-Powered Search Engine

Tired of searching for documents manually? Kendra is an AI-powered search service that understands natural-language questions, not just keywords. It is perfect for businesses with large collections of documents spread across different systems, making search smarter and more intuitive.

Final Thoughts

After experimenting with these AWS AI tools, I realized something: AI isn’t just for big tech companies or hardcore data scientists. Whether you’re securing cloud environments, automating boring tasks, or just trying to build a chatbot for fun, there’s an AWS AI service that can help. Which one are you excited to try? Let me know!

Cloud Security Architects: Protecting the Cloud

In today’s world, it’s hard to imagine any business not relying on technology. Whether it's a small company storing customer data online or a large enterprise hosting its entire operations in the cloud, the need to keep everything safe is more important than ever. This is where a Cloud Security Architect comes in.

But what exactly does this person do, and why is their role so important?

The Basics of Cloud Security

Let’s start by understanding the cloud. When people talk about "the cloud," they're referring to internet-based services and storage. Instead of keeping data on your own computer or company’s servers, the cloud allows you to store it in huge data centers, managed by providers like Amazon Web Services (AWS), Google Cloud, or Microsoft Azure. It’s like renting space in a super-secure digital storage facility.

Now, here’s the catch: just like with a physical building, the cloud has doors and windows (so to speak), and you need someone to make sure only the right people can enter and that intruders are kept out. That’s where a Cloud Security Architect comes in. They design the locks, alarms, and walls that keep everything secure, just as an architect would design a building.

When I was first introduced to cloud security architecture, I fell in love with creating architecture diagrams, and they made it so much easier to design solutions in the cloud.

What Does a Cloud Security Architect Do?

A Cloud Security Architect is like a digital security expert who specializes in protecting the cloud. They create systems that make sure the cloud is safe from hackers, cyberattacks, and any other risks. They work behind the scenes to make sure your sensitive information doesn’t fall into the wrong hands.

Here’s a simpler way to think about it: imagine your house. A Cloud Security Architect is the person who designs the locks on your doors, the alarm system, and even the cameras you might use to make sure everything stays safe. But instead of doing this for a house, they do it for a company’s digital home: the cloud.

I want to share a personal project where securing the cloud environment was essential. We all know how important KMS keys are: AWS Key Management Service (KMS) creates and manages the keys used to encrypt data. I built a solution that sends an email alert whenever a new KMS key is created, so I know right away if an unexpected key shows up.
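The post doesn't describe exactly how that alert was built, so here is one possible way to do it, sketched under assumptions: an Amazon EventBridge rule matches the `CreateKey` API call recorded by CloudTrail, triggers a Lambda function, and the function publishes a message to an SNS topic that has an email subscription. The environment variable `ALERT_TOPIC_ARN` and the helper names are hypothetical, and the location of the key ID inside the event (`responseElements.keyMetadata`) is an assumption, so the code falls back to "unknown" if it isn't there.

```python
import os
from typing import Tuple

# EventBridge rule pattern matching KMS CreateKey calls recorded by CloudTrail.
KMS_CREATE_KEY_PATTERN = {
    "source": ["aws.kms"],
    "detail-type": ["AWS API Call via CloudTrail"],
    "detail": {
        "eventSource": ["kms.amazonaws.com"],
        "eventName": ["CreateKey"],
    },
}


def build_alert(event: dict) -> Tuple[str, str]:
    """Turn a CloudTrail CreateKey event (delivered by EventBridge) into a subject and body."""
    detail = event.get("detail", {})
    # Assumption: the new key's metadata appears under responseElements.keyMetadata.
    metadata = (detail.get("responseElements") or {}).get("keyMetadata", {})
    key_id = metadata.get("keyId", "unknown")
    creator = detail.get("userIdentity", {}).get("arn", "unknown")
    region = event.get("region", "unknown")
    when = detail.get("eventTime", "an unknown time")
    subject = f"New KMS key created in {region}"
    body = f"KMS key {key_id} was created by {creator} at {when}."
    return subject, body


def lambda_handler(event, context, sns_client=None):
    """Lambda entry point: publish the alert to an SNS topic with an email subscription."""
    if sns_client is None:
        import boto3  # lazy import so build_alert can be used without boto3 installed

        sns_client = boto3.client("sns")
    subject, body = build_alert(event)
    sns_client.publish(
        TopicArn=os.environ["ALERT_TOPIC_ARN"],  # hypothetical environment variable
        Subject=subject,
        Message=body,
    )
    return {"subject": subject}
```

This relies on CloudTrail capturing the KMS API calls. If you don't need a custom message, an EventBridge rule can also target the SNS topic directly; the Lambda step here only exists to make the email easier to read.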

Why Cloud Security is Important

You might wonder, “Why is security in the cloud such a big deal?”

Here’s why: nearly everything we do is connected to the internet. Companies store private customer information, financial records, medical data, and much more online. If this data isn’t protected, it can be stolen or tampered with. Imagine if your personal bank information or health records were exposed because of poor cloud security!

Key Responsibilities of a Cloud Security Architect

Let’s break down some of the main tasks a Cloud Security Architect handles, but in simpler terms:

Building Security Plans: Think of this as designing the blueprint for a super-secure building. A Cloud Security Architect creates a plan for how to protect all the data and systems stored in the cloud.

Identifying Weak Spots: Just like checking if your windows are locked before leaving the house, a Cloud Security Architect looks for potential risks in the system. They figure out where a hacker might get in and then make sure it doesn’t happen.

Implementing Solutions: This means setting up all the security measures like firewalls (which block unauthorized access), encryption (which scrambles data so only the right people can read it), and monitoring tools that check for suspicious activity.

Monitoring for Threats: After setting up security, the Cloud Security Architect doesn’t just walk away. They continuously monitor the cloud environment, like a security guard watching surveillance cameras, to ensure nothing suspicious happens.

Responding to Incidents: If something does go wrong — say a hacker tries to get in — the Cloud Security Architect is the one who steps in to fix it. They have a plan in place to stop attacks and minimize the damage.
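The "Identifying Weak Spots" step can be made concrete with a small automated check. The sketch below is a hypothetical helper, not a specific tool: it looks through security groups in the shape returned by boto3's `ec2.describe_security_groups()` (the `SecurityGroups` list) and flags sensitive ports that are open to the entire internet. The list of sensitive ports is an assumption; adjust it to your environment.

```python
from typing import Iterable, List, Tuple

# Ports that should rarely, if ever, be reachable from the whole internet.
SENSITIVE_PORTS = {22, 3389, 3306, 5432}  # SSH, RDP, MySQL, PostgreSQL

# Protocols where FromPort/ToPort are port numbers ("-1" means all traffic).
PORT_PROTOCOLS = {"tcp", "udp", "6", "17", "-1"}


def find_world_open_ports(
    security_groups: Iterable[dict], sensitive_ports=SENSITIVE_PORTS
) -> List[Tuple[str, int]]:
    """Return (group_id, port) pairs where a sensitive port is open to any address."""
    findings = []
    for group in security_groups:
        for perm in group.get("IpPermissions", []):
            protocol = str(perm.get("IpProtocol", ""))
            if protocol not in PORT_PROTOCOLS:
                continue  # e.g. ICMP rules, where "ports" mean something else
            open_v4 = any(r.get("CidrIp") == "0.0.0.0/0" for r in perm.get("IpRanges", []))
            open_v6 = any(r.get("CidrIpv6") == "::/0" for r in perm.get("Ipv6Ranges", []))
            if not (open_v4 or open_v6):
                continue
            if protocol == "-1":
                exposed = sorted(sensitive_ports)  # all protocols, all ports
            else:
                low = perm.get("FromPort", 0)
                high = perm.get("ToPort", 65535)
                exposed = sorted(p for p in sensitive_ports if low <= p <= high)
            findings.extend((group["GroupId"], port) for port in exposed)
    return findings
```

In an account you could feed it `boto3.client("ec2").describe_security_groups()["SecurityGroups"]`; with many groups, use the EC2 paginator for `describe_security_groups` so no results are missed.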

The Skills You Need to Be a Cloud Security Architect

You don’t need to be a wizard with computers to start learning about cloud security, but there are some essential skills and knowledge areas for this role. Some of the key skills include:

Understanding Cloud Platforms: Like knowing how to use different types of phones (iPhone, Android), a Cloud Security Architect needs to understand different cloud platforms like AWS, Azure, or Google Cloud.

Cybersecurity Basics: They also need to know how to protect data from getting stolen, how to block unauthorized users, and how to detect if something isn’t right.

Problem-Solving: This role requires thinking on your feet and coming up with solutions when things don’t go according to plan.

The Value of Cloud Security

At the end of the day, the job of a Cloud Security Architect is all about keeping the cloud safe. Every day, businesses and individuals depend on the cloud for everything from storing personal photos to running million-dollar operations. Without proper security, none of that would be possible.

Glossary of Tech Terms

Cloud: Refers to internet-based services and storage. Instead of storing data on your own devices, the cloud allows you to store it in remote data centers managed by providers like AWS, Google Cloud, or Microsoft Azure.

Amazon Web Services (AWS): A cloud services platform offering computing power, storage, and other functionalities to help businesses and individuals scale and grow.

Google Cloud: A cloud computing service provided by Google, offering infrastructure and data management tools.

Microsoft Azure: Microsoft’s cloud platform, offering a variety of services including computing, storage, and networking for businesses.

Firewall: A security system that monitors and controls incoming and outgoing network traffic based on predetermined security rules. It acts like a barrier between a trusted internal network and untrusted external networks.

Encryption: A method of converting information or data into a code to prevent unauthorized access. Encryption ensures that only authorized users can read the data.

KMS (Key Management Service): A service (often offered by cloud providers like AWS) used to create and manage encryption keys that secure your data. It helps ensure that sensitive information is protected by controlling who can access and manage encryption keys.

Cyberattack: An attempt by hackers to damage or gain unauthorized access to computer systems, networks, or data.

Hacker: A person who uses technical expertise to gain unauthorized access to systems or data. Hackers can be malicious (black-hat) or ethical (white-hat).

Lessons Learned from AWS Solutions Architect Associate Certification

After three months of intense prep for the AWS Solutions Architect Associate (SAA) certification, I was feeling pretty confident until the night before the exam. My brain decided it was party time, and I couldn’t sleep to save my life! I tossed and turned and ended up with a grand total of three hours of sleep. Let’s just say, I rolled into exam day fueled by adrenaline and coffee, ready for anything!

As someone who's recently passed the AWS Solutions Architect Associate exam, I wanted to share some key AWS services and architectural principles that were essential in my journey. Here's a look at some services and frameworks that stood out:

1. Network Load Balancer (NLB) vs. Application Load Balancer (ALB)

AWS offers several types of load balancers. These two are the most commonly compared, and each is optimized for different needs:

NLB operates at Layer 4 (Transport Layer), making it ideal for scenarios where low latency and high throughput are critical, such as gaming or streaming. It’s designed to handle millions of requests per second.

ALB operates at Layer 7 (Application Layer) and is perfect for web applications. It can route traffic based on URL, host, or HTTP header, which is useful for microservices architectures or scenarios where content-based routing is necessary.

Both have their place in modern architectures, and understanding their differences is key to making the right choice for your application’s performance needs.
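Content-based routing on an ALB is configured as listener rules. As a sketch, the hypothetical helper below builds the arguments for boto3's `elbv2.create_rule` to send requests whose path matches a pattern such as `/api/*` to a separate target group, a common pattern when splitting an app into microservices. The ARNs and priority are placeholders; rules are evaluated in priority order, lowest number first.

```python
def build_path_rule(
    listener_arn: str, target_group_arn: str, path_pattern: str, priority: int
) -> dict:
    """Return keyword arguments for elbv2.create_rule that forward a URL path to a target group."""
    if not 1 <= priority <= 50000:
        raise ValueError("ALB rule priority must be between 1 and 50000")
    return {
        "ListenerArn": listener_arn,
        "Priority": priority,
        # Layer 7 condition: match on the request's URL path
        "Conditions": [
            {"Field": "path-pattern", "PathPatternConfig": {"Values": [path_pattern]}}
        ],
        "Actions": [{"Type": "forward", "TargetGroupArn": target_group_arn}],
    }
```

Usage: `boto3.client("elbv2").create_rule(**build_path_rule(listener_arn, api_target_group_arn, "/api/*", 10))`. An NLB has no equivalent rule, since at Layer 4 it never looks at URLs.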

2. AWS Fargate

AWS Fargate is a serverless compute engine that works with both Amazon ECS and EKS. It allows you to run containers without having to manage the underlying infrastructure. Fargate abstracts the servers, so you can focus entirely on building and running your containerized applications.

Key benefits:

No need to provision or scale servers.

Pay only for the resources your containers use.

Enhanced security isolation.

This service simplifies running containers, especially for teams that want to deploy microservices without managing the servers underneath their clusters.
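To make "no servers to manage" concrete: with Fargate you describe a task (container image, CPU, memory) and AWS finds capacity to run it. The sketch below builds the arguments for boto3's `ecs.register_task_definition` for a Fargate task. The family name, image, and port are placeholders, and the CPU/memory table only covers the three smallest task sizes (check the ECS documentation for the full list). Private images or CloudWatch logging would also need an `executionRoleArn`, which is left out here.

```python
# Valid Fargate CPU units -> memory (MiB) pairings for the smaller task sizes.
FARGATE_MEMORY_OPTIONS = {
    "256": {"512", "1024", "2048"},
    "512": {"1024", "2048", "3072", "4096"},
    "1024": {str(m) for m in range(2048, 8193, 1024)},  # 2 GB to 8 GB in 1 GB steps
}


def fargate_task_definition(
    family: str, image: str, cpu: str = "256", memory: str = "512", container_port: int = 80
) -> dict:
    """Return keyword arguments for ecs.register_task_definition for a Fargate task."""
    allowed = FARGATE_MEMORY_OPTIONS.get(cpu)
    if allowed is None or memory not in allowed:
        raise ValueError(f"unsupported Fargate size in this sketch: cpu={cpu}, memory={memory}")
    return {
        "family": family,
        "requiresCompatibilities": ["FARGATE"],
        "networkMode": "awsvpc",  # Fargate tasks use awsvpc networking
        "cpu": cpu,
        "memory": memory,
        "containerDefinitions": [
            {
                "name": family,
                "image": image,
                "essential": True,
                "portMappings": [{"containerPort": container_port, "protocol": "tcp"}],
            }
        ],
    }
```

Usage: `boto3.client("ecs").register_task_definition(**fargate_task_definition("web", "nginx:latest"))`, then start it with `run_task` using `launchType="FARGATE"`. Notice there is no instance type anywhere, which is the point of Fargate.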

3. Amazon Rekognition

Amazon Rekognition is a powerful image and video analysis service that can identify objects, people, text, and activities, including in streaming video. Whether it’s facial recognition, facial analysis (such as estimating emotions), or detecting inappropriate content, Rekognition provides machine learning-based analysis without requiring any specialized AI knowledge.

Use cases:

Facial authentication for security.

Identifying celebrities in media content.

Automating image or video tagging.
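For the tagging use case, a minimal sketch with boto3's `detect_labels` operation might look like the following. It assumes the image is already stored in S3 and that credentials are configured; the bucket, key, confidence cutoff, and helper names are hypothetical.

```python
from typing import List


def labels_to_tags(response: dict, min_confidence: float = 90.0) -> List[str]:
    """Turn a DetectLabels response into sorted, lowercase tags above a confidence cutoff."""
    return sorted(
        {
            label["Name"].lower()
            for label in response.get("Labels", [])
            if label.get("Confidence", 0.0) >= min_confidence
        }
    )


def tag_s3_image(bucket: str, key: str, min_confidence: float = 90.0) -> List[str]:
    """Ask Rekognition to label an image stored in S3 (needs AWS credentials)."""
    import boto3  # lazy import so labels_to_tags works without boto3

    client = boto3.client("rekognition")
    response = client.detect_labels(
        Image={"S3Object": {"Bucket": bucket, "Name": key}},
        MaxLabels=20,
        MinConfidence=min_confidence,
    )
    return labels_to_tags(response, min_confidence)
```

A higher cutoff gives fewer but more reliable tags; lower it if you would rather review extra suggestions by hand.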

4. AWS Glue

AWS Glue is a fully managed ETL (Extract, Transform, Load) service that makes it easy to prepare and transform data for analytics. Its crawlers discover your data sources and record their schemas in the Glue Data Catalog, and Glue jobs then transform the data and load it into your data lake or data warehouse, where it can be queried using tools like Athena or Redshift.

Why it's important:

Great for building a data pipeline with minimal operational overhead.

Built-in integration with Amazon S3, Redshift, and other AWS services.

Serverless, so you only pay for what you use.

5. AWS Elastic Beanstalk

Elastic Beanstalk simplifies the deployment and scaling of web applications and services. You simply upload your code, and it automatically handles the deployment, from capacity provisioning to load balancing and monitoring.

What makes it unique:

Supports a variety of platforms, including Java, .NET, Node.js, Python, Ruby, and more.

Easy to use with auto-scaling and load balancing built-in.

You retain full control of the underlying resources.

Elastic Beanstalk is ideal for developers who want to focus on their application without worrying about the infrastructure.

6. AWS Architecture

Understanding AWS architecture involves piecing together various services to create a scalable, resilient, and secure cloud solution. Common architectural components include:

VPCs for network isolation.

Auto Scaling Groups for scaling applications automatically based on demand.

S3 and RDS for scalable storage and databases.

IAM roles and policies to secure access to resources.

When designing architecture, it's crucial to think about availability zones (AZs), multi-region deployments, and fault tolerance to ensure your applications are resilient to failure.

The Well-Architected Framework provides a set of best practices and guidance for building secure, high-performing, resilient, and efficient infrastructure on AWS. It is organized around six pillars:

Operational Excellence: Ensure processes are automated, reliable, and manageable.

Security: Protect data and systems through encryption, IAM, and monitoring.

Reliability: Design for high availability, fault tolerance, and disaster recovery.

Performance Efficiency: Make sure your resources are being used efficiently.

Cost Optimization: Right-size resources and take advantage of cost-effective services.

Sustainability: Minimize the environmental impact of running your cloud workloads.

Applying these principles helps keep your AWS architecture optimized, resilient, and ready to scale as your requirements change.

These services and concepts were integral to my learning and preparation for the AWS Solutions Architect Associate exam. Each one plays a critical role in designing and deploying secure, scalable, and high-performing applications on AWS. Whether you're starting your AWS journey or looking to dive deeper, understanding these tools and best practices is key to leveraging the full power of the AWS cloud.

Resources used to pass this certification:

10 Essential Social Media Cybersecurity Tips

In today's world, social media has become part of our daily lives. From sharing updates with friends to engaging with brands, social media platforms offer plenty of opportunities for connection and communication. However, with this increased connectivity come cybersecurity risks. In this blog post, I'll share my top 10 cybersecurity tips for social media.

1. Strong Passwords: One of the simplest yet most effective ways to enhance social media cybersecurity is by using strong, unique passwords for each platform. Avoid using easily guessable passwords and consider using a password manager to store and manage your login credentials securely.

A strong password is long (ideally 12 characters or more) and typically combines uppercase and lowercase letters, numbers, and special characters. Here's an example of the format:

Example: R3d@pple$2023!
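Patterns like a word plus a year are easy to remember but also easy for cracking tools to guess, so letting software pick the characters at random is usually safer. Here is a minimal Python sketch using the standard-library `secrets` module; the symbol set and 16-character default are assumptions you can change. In practice, your password manager's built-in generator does the same job.

```python
import secrets
import string

SYMBOLS = "!@#$%^&*-_=+?"
ALPHABET = string.ascii_letters + string.digits + SYMBOLS


def generate_password(length: int = 16) -> str:
    """Return a random password containing all four character classes."""
    if length < 4:
        raise ValueError("length must be at least 4 to include every character class")
    while True:
        # secrets draws from the operating system's cryptographically secure source
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        # Retry until every class is present, rather than forcing characters into fixed spots
        if (
            any(c.islower() for c in candidate)
            and any(c.isupper() for c in candidate)
            and any(c.isdigit() for c in candidate)
            and any(c in SYMBOLS for c in candidate)
        ):
            return candidate
```

Retrying instead of inserting one of each character type at fixed positions keeps the result unpredictable.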

2. Two-Factor Authentication (2FA): Enable two-factor authentication wherever possible to add an extra layer of security to your social media accounts. 2FA requires you to provide a second form of verification, such as a code sent to your phone, in addition to your password, making it much harder for unauthorized users to access your accounts.

Here's an example of how 2FA works:

Password: The user enters their username and password as usual to log in to their account.

Verification Code: After entering the correct password, the user is prompted to enter a verification code. This code is typically sent to a trusted device, such as a smartphone, by text message or email, or generated by an authenticator app on that device.

Popular authenticator apps used for generating 2FA codes include Google Authenticator, Authy, and Microsoft Authenticator.
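Authenticator apps like these typically implement TOTP (time-based one-time passwords, defined in RFC 6238): the app and the service share a secret key when you scan the setup QR code, and both derive a short code from that key and the current 30-second time window. The sketch below shows the idea using only Python's standard library. It is meant to explain how the codes work, not to replace a vetted library, and the function names are hypothetical.

```python
import base64
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(key: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) for a given counter value."""
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation: low 4 bits pick a starting byte
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(key: bytes, for_time: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password: HOTP where the counter is the current time window."""
    if for_time is None:
        for_time = time.time()
    return hotp(key, int(for_time // step), digits)


def key_from_base32(secret: str) -> bytes:
    """Decode the base32 secret that setup QR codes usually carry."""
    padded = secret.upper() + "=" * (-len(secret) % 8)
    return base64.b32decode(padded)
```

Because the phone and the server compute the same code independently, nothing has to be sent to your device, which is why authenticator apps keep working offline and why they are generally considered safer than SMS codes.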

3. Privacy Settings: Familiarize yourself with the privacy settings offered by each social media platform and customize them according to your preferences.

Profile Visibility: Choose who can view your profile information, including your profile picture, cover photo, bio, and other details.

Post Visibility: Determine who can see the posts you share on your timeline.

4. Beware of Phishing Attacks: Be vigilant against phishing attacks, where cybercriminals attempt to trick you into revealing sensitive information or login credentials through fraudulent emails, messages, or websites. Here's an example of how a typical social media phishing attack unfolds:

Fake Social Media Message: You receive a direct message on your social media account from someone posing as a friend, acquaintance, or trusted entity. The message may claim that they've shared an important document, photo, or video with you and provide a link to access it.

Urgent Request: The message contains urgent language, such as "Check this out!" or "You need to see this ASAP!" to grab your attention and prompt you to click on the provided link.

Link to a Fake Website: The link in the message directs you to a website that looks like a legitimate login page for the social media platform or another trusted site. However, it's a phishing website designed to steal your login credentials.

Entering Credentials: Unaware that the website is fake, you enter your username and password as prompted to access the supposed document or content.
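One habit that defeats the fake-link step is checking where a link really points before typing a password. The hypothetical helper below shows that logic with Python's `urllib.parse`: the link must use HTTPS, and its host must be the real domain or a subdomain of it, so look-alikes such as `instagram.com.login-verify.example` fail. It won't catch look-alike characters from other alphabets, so treat it as an illustration of what to look for rather than a complete phishing filter.

```python
from urllib.parse import urlsplit


def is_on_domain(url: str, trusted_domain: str) -> bool:
    """True if the URL uses HTTPS and its host is trusted_domain or one of its subdomains."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    # .hostname is already lowercased and ignores any "user@" prefix in the URL
    host = (parts.hostname or "").rstrip(".")
    trusted = trusted_domain.lower().rstrip(".")
    return host == trusted or host.endswith("." + trusted)
```

The key idea is that the domain is read from the right-hand end of the host name, which is exactly the part a phishing link tries to hide.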

5. Update Regularly: Keep your social media apps and devices up to date with the latest security updates. Here's an example of what a careful update routine looks like:

Verifying App Permissions: As part of the update process, you review the permissions requested by each app to ensure they are justified and necessary. You revoke any unnecessary permissions that could pose security risks, such as access to sensitive device features or personal information.

Downloading and Installing: The app store begins downloading the updated files for each selected app and installs them automatically once the download is complete. Throughout the process, you remain vigilant for any signs of suspicious activity or unusual behavior.

Verification and Authentication: Depending on your device settings, you may need to enter your password or use biometric authentication to confirm the update. This additional layer of verification helps ensure that only authorized users can initiate software updates on your device.

Confirmation and Vigilance: Once the updates are successfully installed, you receive a notification confirming that the process is complete. You take a moment to verify that the updates have been applied correctly and that the apps are functioning as expected.

6. Avoid Oversharing: Be mindful of the information you share on social media, as cybercriminals can use seemingly innocuous details to piece together your identity or launch targeted attacks. Avoid sharing sensitive personal information such as your address, phone number, or financial details.

Protect Your Location: Avoid posting real-time updates about your location, especially if you're away from home.

Be Wary of Requests for Information: Be cautious about responding to requests for personal information or engaging with suspicious accounts or messages.

Review Your Friends List: Regularly review your friends or followers list and remove any individuals you don't know or trust.

7. Monitor Account Activity: Regularly monitor your social media account activity for any suspicious or unauthorized login attempts, posts, or messages.

Regularly Check Notifications: Make it a habit to check your social media notifications regularly. Notifications alert you to new activity on your account, such as likes, comments, mentions, friend requests, or messages.

Set Up Alerts: Enable alerts or notifications for suspicious activity on your account, such as login attempts from unrecognized devices or changes to your account settings.

Monitor Direct Messages: Regularly check your direct messages or inbox for any messages from unknown or suspicious accounts.

Use Activity Logs: Use the activity log or login history that most platforms provide to review recent actions on your account and spot any suspicious behavior.

Report Suspicious Activity: If you encounter any suspicious or concerning activity on your social media accounts, such as unauthorized access, harassment, or phishing attempts, report it to the platform's support team immediately.

8. Secure Your Devices: Ensure that the devices you use to access social media platforms, such as smartphones, tablets, and computers, are protected and up-to-date.

Here are some examples of how to secure your devices:

Enable Biometric Authentication: Take advantage of biometric authentication methods, such as fingerprint or facial recognition, to add an extra layer of security to your devices.

Install Antivirus Software: Install reputable antivirus and antimalware software on your devices to detect and remove malicious software, such as viruses, spyware, and ransomware. Keep your antivirus software updated and perform regular scans to identify and eliminate threats.

Secure Your Network: Use a strong and unique password to secure your Wi-Fi network. Consider using a virtual private network (VPN).

Backup Your Data: In the event of a hardware failure, loss, or cyberattack, having backup copies of your important files ensures that you can quickly recover and restore your data.

Practice Safe Browsing Habits: Avoid visiting untrustworthy websites and be wary of phishing attempts and other online scams designed to trick you into revealing sensitive information or installing malware on your devices.

9. Be Wary of Third-Party Apps: Exercise caution when granting permissions to third-party apps that request access to your social media accounts.

Here's an example of how to approach third-party apps cautiously:

Evaluate Permissions: Before granting access to a third-party app, carefully review the permissions it requests. For example, if a photo editing app asks for permission to access your contacts or post on your behalf, consider whether these permissions are necessary for its functionality.

Check App Store Ratings: Review the ratings and reviews of the app in the app store before downloading it.

Read the Privacy Policy: Carefully read the app's privacy policy to understand how your data will be collected, used, and shared.

Use Official App Stores: Download apps only from official app stores, such as the Apple App Store or Google Play Store.

Review Connected Apps: Periodically review the list of connected apps linked to your social media accounts and revoke access for any apps you no longer use or don't recognize.

10. Educate Yourself: Stay informed about the latest cybersecurity threats and best practices.

Here's an example of how to approach self-education in this area:

Stay Informed: Follow reputable cybersecurity blogs, news outlets, and social media accounts that regularly share insights and updates on cybersecurity topics.

Take Online Courses: Enroll in online courses or training programs focused on social media cybersecurity.

Read Books and Guides: Look for resources that provide practical advice, real-world examples, and actionable tips for enhancing your social media cybersecurity knowledge.

Attend Webinars and Workshops: Participate in webinars, workshops, and seminars hosted by cybersecurity professionals and organizations.

Join Online Communities: Join online communities and forums dedicated to cybersecurity and social media safety.

Follow Industry Experts: Follow respected cybersecurity experts, researchers, and thought leaders on social media platforms.

Share Knowledge with Others: Share your cybersecurity knowledge and experiences with friends, family members, and colleagues to raise awareness and promote good cybersecurity practices in your social circles. Encourage others to educate themselves and take proactive steps to protect their online privacy and security.

By following these 10 social media cybersecurity tips, you can help safeguard your personal information, privacy, and online safety in an increasingly digital world. Stay vigilant, stay informed, and stay safe online!

Identity Theft 101: Protecting Your Digital Identity

Introduction

In today's increasingly digital world, the risk of identity theft has become a prevalent concern. Identity theft can have serious consequences, ranging from financial loss to damage to your personal and professional reputation. This blog post aims to provide a comprehensive overview of identity theft, its various forms, and practical steps to safeguard your digital identity.

Data Breaches

Data breaches are incidents in which cybercriminals gain unauthorized access to databases, exposing sensitive information such as personal records, email addresses, and passwords. Hackers often sell this data on the dark web, making it available to the highest bidder.

Insecure Browsing

Browsing the internet on unsecured or public Wi-Fi networks can expose you to various online threats. Attackers can intercept your data, including login credentials and personal information, through unencrypted connections.

The Dark Web

The dark web is a hidden part of the internet where illegal activities often take place, including the buying and selling of stolen data. It's essential to be aware of the risks associated with the dark web and avoid engaging in it.

Malware

Malicious software, or malware, includes viruses, spyware, and ransomware that can compromise your computer or mobile device. Cybercriminals use malware to steal your data, access your accounts, or demand a ransom to restore your files.

Credit Card Theft

Credit card theft occurs when cybercriminals steal your credit card information and use it for unauthorized transactions. It's vital to monitor your credit card statements for suspicious activity regularly.

Phishing

Phishing is a deceptive tactic used by cybercriminals to trick individuals into revealing personal information, such as login credentials and credit card details, by posing as trustworthy entities. These attacks often come in the form of email scams or fraudulent websites.

Wi-Fi Hacking

Hackers can infiltrate insecure Wi-Fi networks to intercept data traffic, gaining access to personal and financial information. Always connect to secure networks when possible and use a Virtual Private Network (VPN) for added protection.

Card Skimming

Card skimming involves the installation of devices on ATMs, gas pumps, or point-of-sale terminals to capture card information. Be cautious when using card readers and check for any unusual attachments or tampering.

How to Protect Yourself from Identity Theft

Use Strong, Unique Passwords: Create complex passwords for your online accounts and consider using a password manager to keep track of them.

Enable Two-Factor Authentication (2FA): Implement 2FA wherever possible to add an extra layer of security to your accounts.

Regularly Monitor Financial Statements: Keep a close eye on your bank and credit card statements for any unusual or unauthorized transactions.

Stay Informed: Keep yourself updated on current cyber threats and common phishing tactics.

Install Antivirus and Anti-Malware Software: Protect your devices with reputable security software to detect and prevent malware.

Avoid Suspicious Emails and Links: Be cautious when opening email attachments or clicking on links from unknown sources.

Secure Your Wi-Fi Network: Use strong passwords and encryption for your home Wi-Fi network to prevent unauthorized access.

Limit Personal Information Sharing: Be cautious about sharing personal information on social media or with unverified websites.

Regularly Check Your Credit Report: Request free credit reports annually to monitor for any unusual activity.

Consider Identity Theft Protection Services: These services can provide an added layer of security and assistance in case of an identity theft incident.

Conclusion

Identity theft is a pervasive and constantly evolving threat in our digital age. By understanding the various forms of identity theft and implementing proactive security measures, you can significantly reduce your risk. Protecting your digital identity requires vigilance and a commitment to staying informed about the latest security practices. With the right precautions, you can navigate the digital landscape with confidence and peace of mind.

DevSecOps: Strengthening Software Security

Introduction

DevSecOps, a combination of Development, Security, and Operations, is an approach that integrates security into the entire software development and deployment process. It's a philosophy that emphasizes security from the beginning and throughout the DevOps lifecycle. In this blog post, we'll focus on three key aspects of DevSecOps that are essential for building healthy and secure software: operating system hardening, application security testing in the pipeline, and the deployment of security components.

Operating System Hardening

Hardening the operating system is one of the foundational principles of DevSecOps. Systems hardening is a collection of tools and techniques to reduce vulnerability in applications, systems, infrastructure, firmware, and other areas. This process involves securing the underlying infrastructure where your applications run. By ensuring the operating system is configured and maintained securely, you create a more resilient environment for your software. Here are some key steps in OS hardening:

Patch Management: Regularly update the operating system with security patches and updates to protect against known vulnerabilities.

Minimize Attack Surface: Disable unnecessary services and features to reduce the potential attack surface. Only enable what is essential for the application to function.

Strong Authentication: Implement strong authentication and authorization mechanisms to control access to the system.

Logging and Monitoring: Set up comprehensive logging and monitoring to detect and respond to security incidents promptly.

File System and Permissions: Configure file system permissions and access controls to restrict unauthorized access to critical system files.
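One concrete way to check the file permissions step is to look for world-writable files, which any local user can modify. The sketch below is a minimal audit using only the Python standard library; the function name is hypothetical, and it assumes a Linux/Unix host. Some locations (such as /tmp, protected by the sticky bit) are world-writable on purpose, so treat the output as a list to review, not a list to fix blindly.

```python
import os
import stat
from typing import List


def find_world_writable(root: str) -> List[str]:
    """Return paths under `root` that any user on the system can modify."""
    risky = []
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            path = os.path.join(dirpath, name)
            try:
                mode = os.lstat(path).st_mode
            except OSError:
                continue  # the file vanished or cannot be read; skip it
            if stat.S_ISLNK(mode):
                continue  # symlinks always report 0o777, so they are not meaningful here
            if mode & stat.S_IWOTH:  # the "others may write" permission bit
                risky.append(path)
    return sorted(risky)


if __name__ == "__main__":
    for p in find_world_writable("/etc"):
        print("world-writable:", p)
```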

Perform Application Security Testing in the Pipeline

DevSecOps advocates for the incorporation of security testing into the continuous integration/continuous deployment (CI/CD) pipeline. A CI/CD pipeline is a series of automated processes that help deliver new software versions. This shift-left approach means that security checks are performed as an integral part of the development process, rather than being tacked on at the end. Here's how you can achieve this:

Types of Application Security Testing

Static Application Security Testing (SAST): Use SAST tools to scan your source code for security vulnerabilities. These tools analyze the codebase without executing it, identifying potential issues early in the development process.

Dynamic Application Security Testing (DAST): Conduct DAST during the application's runtime to identify vulnerabilities that may not be apparent in the source code. DAST tools simulate attacks and test the application's runtime behavior.

Interactive Application Security Testing (IAST): IAST tools combine elements of both SAST and DAST, providing real-time feedback on potential vulnerabilities in the running application.

Dependency Scanning: Regularly check for known vulnerabilities in third-party libraries and dependencies your application relies on.
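At its core, dependency scanning compares the versions you have pinned against a list of known advisories. The sketch below shows only that comparison step. The advisory entries and package names are hypothetical placeholders (real scanners such as pip-audit pull advisories from vulnerability databases), and the version parser assumes plain dotted integers like 2.4.1.

```python
import sys
from typing import Dict, List, Tuple

# Hypothetical advisory data: package name -> first version that contains the fix.
ADVISORIES: Dict[str, str] = {
    "examplelib": "2.4.1",
    "fakeparser": "1.0.3",
}


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '2.4.1' into (2, 4, 1) so versions compare numerically, not as strings."""
    return tuple(int(part) for part in text.split("."))


def vulnerable(pinned: Dict[str, str], advisories: Dict[str, str]) -> List[str]:
    """List pinned dependencies that are older than the advisory's fixed version."""
    findings = []
    for name, version in sorted(pinned.items()):
        fixed_in = advisories.get(name)
        if fixed_in and parse_version(version) < parse_version(fixed_in):
            findings.append(f"{name}=={version} (fixed in {fixed_in})")
    return findings


if __name__ == "__main__":
    results = vulnerable({"examplelib": "2.3.9", "otherlib": "0.1.0"}, ADVISORIES)
    for finding in results:
        print("VULNERABLE:", finding)
    sys.exit(1 if results else 0)  # a non-zero exit code fails the CI/CD stage
```

The non-zero exit code is what lets a pipeline stop the build when a vulnerable dependency is found.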

Deployment of Security Components

Integrating security components into the DevOps pipeline is a critical step in DevSecOps. These components are designed to detect, prevent, and respond to security threats. Here are some essential security components to consider:

Web Application Firewalls (WAF): Implement a WAF to protect your web applications from common attacks, such as SQL injection and cross-site scripting.

Intrusion Detection and Prevention Systems (IDPS): Deploy IDPS to detect and block suspicious activities and potential threats in real time.

Security Information and Event Management (SIEM): Use SIEM tools to centralize and analyze security-related data from various sources, helping you detect and respond to security incidents.

Vulnerability Scanners: Continuously scan your infrastructure and applications for vulnerabilities, ensuring you can address them promptly.

Security Orchestration and Automation: Implement automation to streamline incident response and security tasks, enabling rapid and consistent actions in the face of security incidents.

Conclusion

DevSecOps is not just a buzzword; it's a fundamental shift in how organizations approach software development and deployment. By prioritizing operating system hardening, integrating security testing into the CI/CD pipeline, and deploying essential security components, you can build and maintain software that is not only functional but also resilient against evolving security threats. Embracing DevSecOps principles is an investment in the long-term security and success of your applications and systems.


Cloud Security 101: Essentials for a Secure Cloud Environment

Introduction

As businesses continue to adopt cloud computing, the need for strong cloud security practices has become vital. Cloud security is not just an IT concern; it's a shared responsibility between cloud service providers and the organizations that use their services.

In this blog post, we'll cover the essential elements of cloud security: security governance, security assurance, IAM (Identity and Access Management), threat detection, vulnerability management, data protection, application security, and incident response. Let's explore the core principles of cloud security that every organization should consider.

1. Security Governance

POC Security Contacts

Establish Points of Contact (POC) for security within your organization. These individuals will play a critical role in managing and overseeing cloud security operations. They should be well-versed in cloud security best practices and act as a bridge between your organization and cloud service providers.

Know What Region You Want to Work In

Selecting the right cloud region is crucial. Different regions may have varying data sovereignty and compliance requirements. Make sure to choose a region that aligns with your specific needs and regulatory obligations.

2. Security Assurance

Create Reports for Compliance

To maintain regulatory compliance and demonstrate a commitment to security, create reports that detail your adherence to security policies and standards. This can be particularly important for industries with strict compliance requirements.

3. IAM (Identity and Access Management)

Least Privilege

The principle of least privilege is a security concept in which a user is given the minimum levels of access or permissions needed to perform their job. Follow it by ensuring that individuals and applications have access only to the resources and data necessary for their roles. Minimizing excessive permissions reduces the risk of data breaches.
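In AWS, least privilege is expressed through IAM policy documents. The sketch below builds a policy that only lets its holder list one S3 bucket and read objects in it, instead of granting a broad action like "s3:*". The bucket name is a hypothetical placeholder; note that s3:ListBucket applies to the bucket ARN while s3:GetObject applies to the object ARNs under it.

```python
import json


def read_only_bucket_policy(bucket: str) -> dict:
    """Build an IAM policy that only allows listing and reading a single S3 bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ListOneBucket",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket}"],  # the bucket itself
            },
            {
                "Sid": "ReadObjectsInThatBucket",
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": [f"arn:aws:s3:::{bucket}/*"],  # objects inside the bucket
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(read_only_bucket_policy("example-reports-bucket"), indent=2))
```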

Avoid Using the Root User

The root user account should be reserved for emergency situations. Regular users and processes should not rely on this account for everyday tasks, as it poses a significant security risk: the root user has unrestricted access to every resource in the account.

Use MFA (Multi-Factor Authentication)

Multi-factor authentication (MFA) is a multi-step account login process that requires users to provide more than just a password. Enable MFA to add an extra layer of security to user logins; requiring multiple forms of verification helps safeguard against unauthorized access.
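Many "virtual" MFA devices (authenticator apps) generate their six-digit codes with the time-based one-time password algorithm (TOTP, RFC 6238), which is HOTP (RFC 4226) applied to 30-second time steps. The sketch below implements that algorithm with the Python standard library to show what the second factor actually is; it is an illustration of the standard, not AWS's internal implementation, and the function names are hypothetical.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(key: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226)."""
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # "dynamic truncation": pick 4 bytes based on the last nibble
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(key: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the current time step."""
    now = time.time() if at is None else at
    return hotp(key, int(now // step), digits)


def verify(key: bytes, submitted: str, at: Optional[float] = None,
           window: int = 1, step: int = 30) -> bool:
    """Accept a code from the current step or `window` steps either side (clock drift)."""
    now = time.time() if at is None else at
    counter = int(now // step)
    return any(
        hmac.compare_digest(hotp(key, c, len(submitted)), submitted)  # constant-time compare
        for c in range(counter - window, counter + window + 1)
        if c >= 0
    )
```

The server and the authenticator share the secret key once (usually via a QR code); after that, both sides derive the same code from the clock, so a stolen password alone is not enough to log in.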

4. Threat Detection

Splunk

Leverage tools like Splunk for real-time threat detection and analysis. These platforms help identify and respond to suspicious activities and security incidents, and Splunk makes it easier to search and analyze large volumes of log data.

5. Vulnerability Management

Nessus

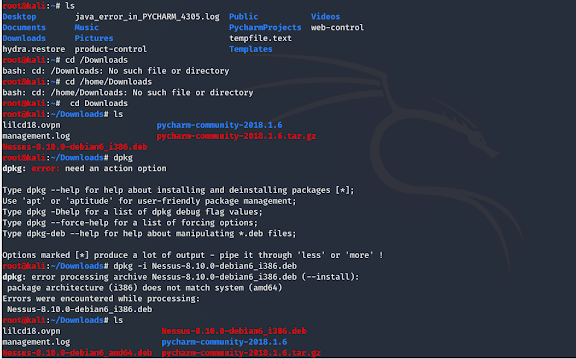

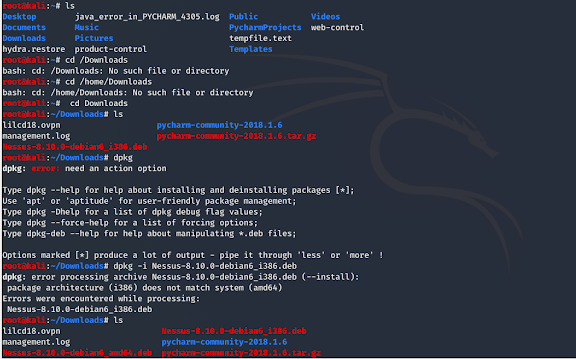

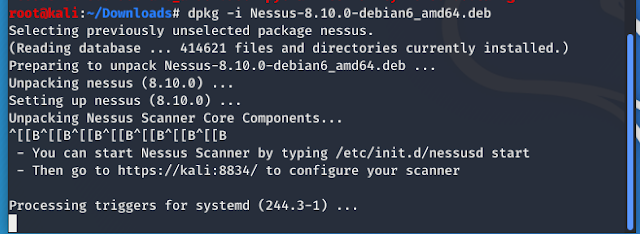

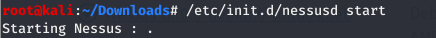

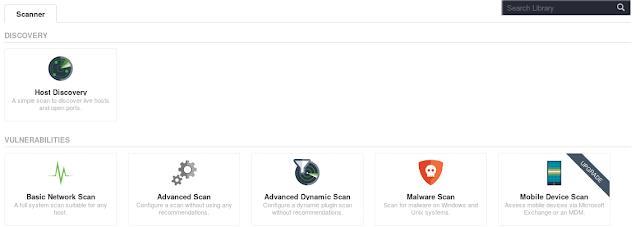

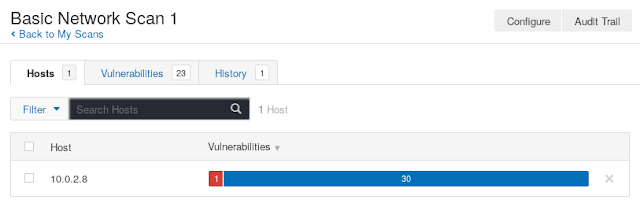

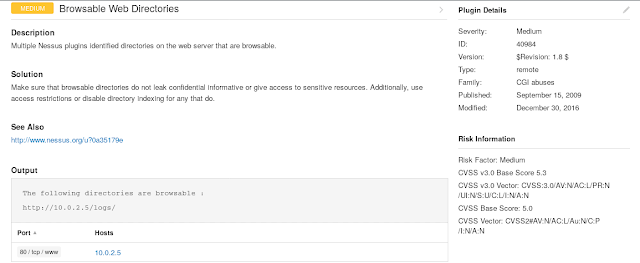

Use vulnerability scanning tools like Nessus to proactively identify and address potential security weaknesses in your cloud environment. Regular scans can help you stay ahead of potential threats. A vulnerability is a weakness in an IT system that can be exploited by an attacker to deliver a successful attack.

6. Data Protection

Backup Data

Regularly back up your data to ensure data availability in case of accidental deletion or cyberattacks. Implement automated backup solutions to minimize data loss.

Encrypt Data at Rest and in Transit

Employ encryption techniques to protect data both at rest and in transit. Encrypting sensitive data adds an extra layer of security, making it unreadable even if intercepted. Data at rest is data stored on an internal or external storage device. Data in transit, also known as data in motion, is data that is being transferred between locations over a private network or the Internet.
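For the in-transit half, the client side of TLS matters as much as the server: a client that skips certificate checks can be intercepted. The sketch below builds a strict client context with Python's standard ssl module; requiring TLS 1.2 or newer is an assumed policy choice, not something the source specifies. It covers data in transit only.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Client-side TLS settings for protecting data in transit."""
    ctx = ssl.create_default_context()            # loads the system's trusted CA certificates
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse older protocol versions
    # create_default_context() already enables both checks; they are set here for clarity.
    ctx.check_hostname = True                     # certificate must match the host name
    ctx.verify_mode = ssl.CERT_REQUIRED           # server must present a valid certificate
    return ctx
```

Usage: pass it to any client that accepts a context, for example urllib.request.urlopen("https://example.com", context=strict_tls_context()).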

7. Application Security

Web Application Firewall

Deploy a Web Application Firewall (WAF) to safeguard your web applications from common vulnerabilities and attacks. WAFs provide a shield against threats like SQL injection and cross-site scripting. Cross-site scripting (XSS) is a client-side vulnerability that targets other application users, while SQL injection is a server-side vulnerability that targets the application's database.
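A WAF filters attacks at the edge, but the application itself should also be immune to SQL injection. The sketch below uses Python's built-in sqlite3 module and a hypothetical users table to contrast a query built with string formatting (injectable) against a parameterized query (safe).

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """In-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT)")
    conn.executemany("INSERT INTO users VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list:
    # VULNERABLE: the input is pasted into the SQL text, so quotes in it rewrite the query.
    return conn.execute(f"SELECT name FROM users WHERE name = '{username}'").fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list:
    # Parameterized: the value is bound to the '?' placeholder and never parsed as SQL.
    return conn.execute("SELECT name FROM users WHERE name = ?", (username,)).fetchall()


if __name__ == "__main__":
    db = make_demo_db()
    payload = "' OR '1'='1"
    print(find_user_unsafe(db, payload))  # returns every row in the table
    print(find_user_safe(db, payload))    # returns no rows: no user has that literal name
```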

No Hard Coded Passwords in Code

Developers should never hard code passwords or other sensitive credentials in application code. Instead, use secure credential storage methods and secret management tools. Hardcoded passwords, also often referred to as embedded credentials, are plain text passwords or other secrets in source code.
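A simple alternative to a hard-coded password is to read the secret from the environment at runtime, where a deployment tool or a secret manager (for example AWS Secrets Manager) can inject it. The sketch below assumes a hypothetical variable name, DB_PASSWORD, and deliberately fails instead of falling back to a default password.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret has not been provided to the process."""


def get_db_password(var_name: str = "DB_PASSWORD") -> str:
    """Read the database password from the environment instead of the source code."""
    value = os.environ.get(var_name)
    if not value:
        # Fail loudly rather than silently using a hard-coded fallback.
        raise MissingSecretError(f"{var_name} is not set")
    return value
```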

8. Incident Response

Tabletop Exercises

Conduct tabletop exercises to simulate security incidents and practice your incident response procedures. This helps ensure that your team is well-prepared to respond effectively in the event of a security breach. A tabletop exercise is a security incident preparedness activity, taking participants through the process of dealing with a simulated incident scenario and providing hands-on training for participants that can then highlight flaws in incident response planning.

Find Root Cause

After an incident occurs, it's essential to conduct a thorough post-incident analysis to determine the root cause. Identifying the underlying issue enables you to implement measures to prevent similar incidents in the future. It's not always possible to find the root cause of a cyber incident. In many cases, the cause of an incident is difficult to identify.

Conclusion

Cloud security is a multifaceted discipline that requires a proactive and comprehensive approach. By following these foundational principles of security governance, security assurance, IAM, threat detection, vulnerability management, data protection, application security, and incident response, you can strengthen the security of your cloud environment. As cloud technologies continue to evolve, adapting and enhancing your security practices is crucial to staying ahead of emerging threats and maintaining the integrity of your organization's data and operations.

To learn more about cloud security check out these resources below:

https://docs.aws.amazon.com/wellarchitected/latest/security-pillar/welcome.html

https://cloudsecurityalliance.org/

https://learn.microsoft.com/en-us/azure/well-architected/security/overview

Cloud Bootcamp: DevOps

I'm excited to put my newfound knowledge into practice and continue exploring the ever-evolving world of cloud engineering and DevOps. Together, let's embrace automation, enhance efficiency, and deliver remarkable software solutions!

Terraform

What is Infrastructure as Code? Infrastructure as code (IaC) tools allow you to manage infrastructure with configuration files rather than through a graphical user interface. IaC allows you to build, change, and manage your infrastructure safely, consistently, and repeatedly by defining resource configurations that you can version, reuse, and share. Terraform is HashiCorp's infrastructure as code tool. It lets you define resources and infrastructure in human-readable, declarative configuration files and manages your infrastructure's lifecycle. Using Terraform has several advantages over managing your infrastructure manually.

The steps below will get you started with Terraform.

Install Terraform

Install Chocolatey (a package manager for Windows) first

Then run this command: choco install terraform

Verify the installation: terraform -help

Build Infrastructure (EC2)

Create a folder for Terraform on your desktop

Create a text file inside your Terraform folder called main.tf

Add your Terraform configuration to the main.tf file and save it
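Terraform reads configuration written in JSON syntax from files ending in .tf.json as well as HCL from .tf files, so a configuration can also be generated by a script. The sketch below writes an EC2 configuration to main.tf.json. The AMI ID is a placeholder you must replace with a real one for your region, and the region, provider version constraint, and tag value are example assumptions. Use either main.tf or main.tf.json for this resource, not both, or Terraform will report a duplicate resource.

```python
import json
from pathlib import Path


def write_main_tf_json(directory: str, ami: str, region: str = "us-west-2") -> Path:
    """Write an EC2 instance configuration in Terraform's JSON syntax (main.tf.json)."""
    config = {
        "terraform": {
            "required_providers": {
                "aws": {"source": "hashicorp/aws", "version": "~> 4.16"}
            },
            "required_version": ">= 1.2.0",
        },
        "provider": {"aws": {"region": region}},
        "resource": {
            "aws_instance": {
                "app_server": {
                    "ami": ami,
                    "instance_type": "t2.micro",
                    "tags": {"Name": "ExampleAppServerInstance"},
                }
            }
        },
    }
    path = Path(directory) / "main.tf.json"
    path.write_text(json.dumps(config, indent=2) + "\n")
    return path


if __name__ == "__main__":
    # "ami-0123456789abcdef0" is a placeholder, not a real image ID.
    print(write_main_tf_json(".", ami="ami-0123456789abcdef0"))
```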

Initialize the directory: terraform init

Format the configuration: terraform fmt

Validate the configuration: terraform validate

Create infrastructure: terraform apply

Inspect state: terraform show

Change Infrastructure

Edit your main.tf file

Apply changes: terraform apply

Destroy Infrastructure

terraform destroy
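The commands above always run in the same order, which makes them easy to script. The sketch below wraps them with Python's subprocess module; dry_run=True (the default) only returns the commands so you can review them. The -auto-approve flag skips Terraform's interactive "yes" prompt, so only use it where unattended changes are intended. The function and list names are hypothetical.

```python
import subprocess
from typing import List, Optional

# The build steps listed above, in order.
BUILD_STEPS: List[List[str]] = [
    ["terraform", "init"],
    ["terraform", "fmt"],
    ["terraform", "validate"],
    ["terraform", "apply", "-auto-approve"],
    ["terraform", "show"],
]
DESTROY_STEPS: List[List[str]] = [["terraform", "destroy", "-auto-approve"]]


def run_steps(workdir: str, steps: Optional[List[List[str]]] = None, dry_run: bool = True) -> List[str]:
    """Run each Terraform command in `workdir`, stopping at the first failure."""
    executed = []
    for argv in steps if steps is not None else BUILD_STEPS:
        executed.append(" ".join(argv))
        if not dry_run:
            subprocess.run(argv, cwd=workdir, check=True)  # check=True raises on a non-zero exit
    return executed
```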

Define Input Variables

Edit main.tf so the instance's Name tag uses var.instance_name instead of a hard-coded name

Create a variables.tf file that defines the instance_name variable

Update the instance's name: terraform apply -var "instance_name=YetAnotherName"
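The same variable can be declared in Terraform's JSON syntax (variables.tf.json). The sketch below writes that declaration and builds the -var override command from this step. In JSON syntax, a reference to the variable is written as a string template, so the tag would read "Name": "${var.instance_name}". The description and default value are example assumptions.

```python
import json
from pathlib import Path
from typing import List


def write_variables(directory: str, default_name: str = "ExampleAppServerInstance") -> Path:
    """Declare an instance_name input variable in variables.tf.json."""
    variables = {
        "variable": {
            "instance_name": {
                "description": "Value of the Name tag for the EC2 instance",
                "type": "string",  # in JSON syntax the type constraint is given as a string
                "default": default_name,
            }
        }
    }
    path = Path(directory) / "variables.tf.json"
    path.write_text(json.dumps(variables, indent=2) + "\n")
    return path


def apply_with_name(name: str) -> List[str]:
    """Equivalent of: terraform apply -var "instance_name=<name>"."""
    return ["terraform", "apply", "-var", f"instance_name={name}"]
```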

Query Data with Outputs

Create an outputs.tf file

Apply new configuration: terraform apply

Query the output: terraform output
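For scripts, terraform output -json prints every output as an object with "sensitive", "type", and "value" fields. The sketch below flattens that into a simple name-to-value mapping. The masking of sensitive values is a choice made in this sketch: Terraform's JSON output itself includes them in plain text. The sample output names and values in the test are hypothetical.

```python
import json
import subprocess
from typing import Any, Dict


def parse_outputs(raw_json: str) -> Dict[str, Any]:
    """Flatten `terraform output -json` into {name: value}, masking sensitive values."""
    outputs = json.loads(raw_json)
    return {
        name: ("<sensitive>" if meta.get("sensitive") else meta["value"])
        for name, meta in outputs.items()
    }


def read_outputs(workdir: str) -> Dict[str, Any]:
    """Run `terraform output -json` in `workdir` and parse the result."""
    result = subprocess.run(
        ["terraform", "output", "-json"],
        cwd=workdir, check=True, capture_output=True, text=True,
    )
    return parse_outputs(result.stdout)
```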

Create IAM Policies with Terraform

Clone the example repository (Create IAM policies with Terraform)

Review the IAM policy resource

Refactor your policy

Create a policy attachment

Create your user, bucket, and policy

Test the policy

I was able to create the policy successfully.

This tutorial focuses on creating IAM policies using Terraform. IAM policies are used to assign explicit permissions to IAM identities (users, groups, or roles) for accessing AWS resources. The tutorial highlights the advantages of managing IAM policies with Terraform and provides step-by-step instructions to create an IAM user, an S3 bucket, and an IAM policy.

Here's a summary of the tutorial steps:

Prerequisites: Ensure that you have Terraform v1.2+ installed, a Terraform Cloud account, AWS CLI, IAM administrative permissions, and AWS credentials configured in Terraform Cloud.

Clone the example repository: Clone the repository containing the example code for creating IAM policies with Terraform.

Review the IAM policy resource: Open the main.tf file and review the IAM policy resource, S3 bucket, and IAM user configurations. The IAM policy resource defines the policy privileges using a JSON document.

Refactor the policy: Refactor the policy by using the aws_iam_policy_document data source, which generates a JSON representation of the IAM policy document. This approach offers flexibility, reusability, and automatic JSON formatting.

Create a policy attachment: Add a policy attachment resource to apply the policy to the IAM user. This step ensures that the policy is applied to the desired users or roles.

Apply the configuration: Initialize the Terraform configuration, apply the changes, and create the IAM user, S3 bucket, and policy.

Test the policy: Use the AWS Policy Simulator to test the policy's effectiveness. Verify that the user is denied actions like deleting objects or buckets in the S3 service but allowed to perform actions on the specific bucket created in the configuration.
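The Policy Simulator applies IAM's evaluation logic, whose core rule is: an explicit Deny always wins, otherwise any matching Allow grants access, and anything not allowed is implicitly denied. The sketch below reproduces only that core rule so you can reason about a policy offline. It ignores conditions, NotAction/NotResource, and other policy types, and fnmatch's [ ] syntax has no IAM equivalent; the example policy and bucket name are hypothetical.

```python
from fnmatch import fnmatchcase
from typing import Iterable, Union


def _matches(patterns: Union[str, Iterable[str]], value: str, ignore_case: bool = False) -> bool:
    """True if `value` matches any IAM-style wildcard pattern ('*' and '?')."""
    if isinstance(patterns, str):
        patterns = [patterns]
    if ignore_case:
        return any(fnmatchcase(value.lower(), p.lower()) for p in patterns)
    return any(fnmatchcase(value, p) for p in patterns)


def is_allowed(policy: dict, action: str, resource: str) -> bool:
    """Simplified IAM evaluation: explicit Deny wins, then any Allow, else implicit deny."""
    allowed = False
    for stmt in policy.get("Statement", []):
        # Action names are case-insensitive in IAM; ARNs are compared as written.
        if _matches(stmt["Action"], action, ignore_case=True) and _matches(stmt["Resource"], resource):
            if stmt["Effect"] == "Deny":
                return False
            if stmt["Effect"] == "Allow":
                allowed = True
    return allowed


# Hypothetical policy: full access to one bucket, but no deletes anywhere.
EXAMPLE_POLICY = {
    "Statement": [
        {"Effect": "Allow", "Action": "s3:*",
         "Resource": ["arn:aws:s3:::demo-bucket", "arn:aws:s3:::demo-bucket/*"]},
        {"Effect": "Deny", "Action": ["s3:DeleteObject", "s3:DeleteBucket"], "Resource": "*"},
    ]
}
```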

Clean up: Destroy the infrastructure created in the tutorial using the terraform destroy command. If using Terraform Cloud, delete the workspace associated with the tutorial.

By following this tutorial, you can learn how to create and manage IAM policies using Terraform, ensuring granular control over access to your AWS resources.

Manage AWS auto-scaling groups with Terraform

Clone example repository

Review configuration

Security groups

Apply configuration

Scale instances

Use the AWS CLI to scale the number of instances in your ASG.

Set lifecycle rule

Add scaling policy

Destroy configuration

This tutorial focuses on managing AWS Auto Scaling Groups (ASGs) using Terraform. ASGs allow you to scale and manage a collection of EC2 instances with the same configuration. Terraform is a tool for provisioning and managing infrastructure resources, and it supports the dynamic aspects of ASGs.

The tutorial covers the following steps:

Prerequisites: You need to have Terraform v1.1+ installed, an AWS account with Terraform credentials configured, and the AWS CLI.

Clone the example repository: Clone the repository that contains the Terraform configuration for creating an ASG.

Review the configuration: Open the main.tf file to review the configuration. It includes definitions for an EC2 Launch Configuration, an Auto Scaling Group, load balancer resources, and security groups.

Apply the configuration: Initialize your configuration with terraform init and then apply the configuration with terraform apply. This will create the VPC, networking resources, Auto Scaling group, launch configuration, load balancer, and target group.

Test the application: Use cURL to send a request to the load balancer endpoint and verify that the application is running.

Scale instances: Use the AWS CLI to scale the number of instances in your ASG. For example, you can use the aws autoscaling set-desired-capacity command to increase the desired capacity.
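The scaling step boils down to one AWS CLI call. The sketch below builds that command and checks one rule first: the desired capacity must stay between the group's minimum and maximum size, or AWS rejects the request. The group name and sizes in the test are hypothetical. Because Terraform also tracks desired_capacity, a later terraform apply may revert a change made this way.

```python
from typing import List


def set_desired_capacity_cmd(group: str, desired: int, min_size: int, max_size: int) -> List[str]:
    """Build the AWS CLI command that changes an Auto Scaling group's desired capacity."""
    if not min_size <= desired <= max_size:
        raise ValueError(f"desired capacity {desired} must be between {min_size} and {max_size}")
    return [
        "aws", "autoscaling", "set-desired-capacity",
        "--auto-scaling-group-name", group,
        "--desired-capacity", str(desired),
    ]
```

Usage: subprocess.run(set_desired_capacity_cmd("my-asg", 3, 1, 4), check=True).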

Set a lifecycle rule: To prevent Terraform from scaling instances when it changes other aspects of the configuration, add a lifecycle argument to the aws_autoscaling_group resource block. This rule ignores changes to the desired capacity and target groups.

By following this tutorial, you will learn how to provision and manage an Auto Scaling group using Terraform, configure scaling policies, and integrate it with other AWS resources such as load balancers.

Docker

Creating a Docker container with Terraform

Install Docker

Make sure Docker is running

mkdir learn-terraform-docker-container

cd learn-terraform-docker-container

Create a main.tf file

terraform init

terraform apply

terraform destroy

I had no issues creating a Docker container with Terraform.

This is how the Docker Desktop application looks on Windows.

How do I run a container?

Clone the repository at https://github.com/docker/welcome-to-docker.

Open the sample application in your IDE. Note that it already has a Dockerfile. For your own projects you need to create this yourself.

Build your first image: docker build -t welcome-to-docker /path/to/dockerfile-directory

Run your container from Docker Desktop

Stop the container

The message you get when you run your first Docker container.

Docker basics

What is Docker?

Virtualization software

Makes developing and deploying applications much easier

Packages application with all the necessary dependencies, configuration, system tools and runtime

Problems Docker solves

Apart from the Docker runtime, no configuration is needed on the server

Virtual machine vs Docker

Containers take seconds to start vs VMs take minutes to start

Docker images are a couple of MB vs VM images that are a couple of GB

Docker Images vs Containers

Docker containers are the live, running instances of Docker images. While Docker images are read-only files, containers are live, ephemeral, executable content.

Docker Registries

A storage and distribution system for Docker images

Docker Image Versions

Docker images are versioned and different versions are identified by tags

Docker run command: docker run creates and starts a new container from an image
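Image versions are selected with a tag after a colon, and when you leave the tag off, Docker uses latest. The sketch below splits an image reference into name and tag under those rules; the tricky case is a registry host with a port (localhost:5000/app), where the colon is not a tag separator. It is a simplified parser with a hypothetical name and does not handle digest references (image@sha256:...).

```python
from typing import Tuple


def split_image_ref(ref: str) -> Tuple[str, str]:
    """Split 'name[:tag]' into (name, tag), defaulting the tag to 'latest'."""
    name, sep, tag = ref.rpartition(":")
    # No colon at all, or a colon followed by a '/' (a registry host:port), means no tag.
    if not sep or "/" in tag:
        return ref, "latest"
    return name, tag
```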

GitHub actions

Automating Terraform with GitHub Actions:

Setup Terraform Cloud

Setup a Github repository

Review Actions workflows

Create pull requests

Review and merge pull request

Verify EC2 instance provisioned

This tutorial provides instructions on automating Terraform workflows using GitHub Actions and Terraform Cloud. Here is a summary of the steps involved:

Introduction: GitHub Actions is introduced as a tool for automating software builds, tests, and deployments, while Terraform is described as a tool for managing infrastructure as code.

Prerequisites: The tutorial assumes familiarity with Terraform and Terraform Cloud workflows and requires a GitHub account, Terraform Cloud account, and AWS account.

Set up Terraform Cloud: Create a new Terraform Cloud workspace, add AWS credentials as environment variables, and generate a Terraform Cloud user API token.

Set up a GitHub repository: Fork the Learn Terraform GitHub Actions template repository, set up repository secrets, and clone the repository to your local machine.

Review Actions workflows: Review the provided workflows for Terraform plan and Terraform apply.

Terraform plan workflow: Configure the workflow to run on pull requests, define environment variables, and set up steps for checking out the repository, uploading the configuration to Terraform Cloud, creating a speculative plan run, retrieving the plan output, and updating the pull request with the plan information.

Terraform apply workflow: Configure the workflow to run on pushes to the main branch, define environment variables, and set up steps for checking out the repository, uploading the configuration to Terraform Cloud, and creating and applying an apply run.

Create pull request: Create a new branch, commit the organization name changes, and push the changes to trigger the Terraform plan workflow.

Review and merge pull request: Review the speculative plan that the Terraform plan workflow posted to the pull request (you can also view it in Terraform Cloud), then merge the pull request, which triggers the Terraform apply workflow.

Verify EC2 instance provisioned: After merging the pull request, go to GitHub Actions, select the Terraform Apply workflow, and wait for it to complete. Click the link to view the run in Terraform Cloud and verify that the EC2 instance is provisioned.

Destroy resources: To clean up, queue a destroy plan and apply it in Terraform Cloud, then delete the workspace.

By following these steps, you can automate the deployment of a publicly accessible web server using Terraform, GitHub Actions, and Terraform Cloud.

GitHub Actions basics

What is GitHub Actions?

Platform to automate developer workflows

CI/CD is one of the many workflows

Developer workflow

Add new contributors

Pull requests are created

Review pull request

Is the bug fixed?

Merge to master branch

Prepare release notes

Update version number

CI/CD pipeline: Merged code>>Test>>Build>>Deploy

Automate as much as possible

Basic GitHub Actions

Most common workflow: Test>>Build>>Push>>Deploy

Syntax of a workflow

name

on

jobs

uses
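Workflow files are YAML, but their structure is just nested keys, so it can be sketched as a Python dict. The example below mirrors a minimal workflow and runs a few basic structure checks: on and jobs must be present, each job needs runs-on, and each step uses either uses (a prebuilt action such as actions/checkout) or run (a shell command). These checks are a simplification (jobs that call reusable workflows, for example, have no runs-on), and the workflow contents are hypothetical.

```python
from typing import Any, Dict, List

# A minimal workflow expressed as a Python dict with the same keys as the YAML file.
WORKFLOW: Dict[str, Any] = {
    "name": "CI",
    "on": ["push", "pull_request"],
    "jobs": {
        "test": {
            "runs-on": "ubuntu-latest",
            "steps": [
                {"uses": "actions/checkout@v4"},
                {"run": "echo 'run your tests here'"},
            ],
        }
    },
}


def check_workflow(wf: Dict[str, Any]) -> List[str]:
    """Return structural problems found in a workflow; an empty list means the basics are present."""
    problems = []
    for key in ("on", "jobs"):
        if key not in wf:
            problems.append(f"missing top-level '{key}'")
    for job_id, job in wf.get("jobs", {}).items():
        if "runs-on" not in job:
            problems.append(f"job '{job_id}' has no 'runs-on'")
        for i, step in enumerate(job.get("steps", [])):
            if ("uses" in step) == ("run" in step):  # both or neither
                problems.append(f"job '{job_id}' step {i} needs exactly one of 'uses' or 'run'")
    return problems
```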

GitHub Actions runner

Runners are the machines that execute jobs in a GitHub Actions workflow. For example, a runner can clone your repository locally, install testing software, and then run commands that evaluate your code. GitHub provides runners that you can use to run your jobs, or you can host your own runners.

Conclusion: Here is a resource to learn more about DevOps. https://www.youtube.com/watch?v=0yWAtQ6wYNM&list=PLy7NrYWoggjwV7qC4kmgbgtFBsqkrsefG&index=1&t=726s

Cloud Bootcamp: Cloud Project

You can't leave out learning about a cloud provider when learning cloud engineering. I stuck with AWS since it is what I know best. Cloud computing is the future, so it is best to learn it now; more and more companies will be adopting cloud infrastructure.

What is cloud computing? Cloud computing is the on-demand delivery of IT resources over the Internet with pay-as-you-go pricing. Instead of buying, owning, and maintaining physical data centers and servers, you can access technology services, such as computing power, storage, and databases, on an as-needed basis.

What are the big 3 cloud providers? Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP).

Serverless Sending Application:

Manage email and SMS sending using Step Functions;

Create and send an email with AWS using SES and Lambda;

Lambda: AWS Lambda is a serverless, event-driven compute service that lets you run code for virtually any type of application or backend service without provisioning or managing servers. You can trigger Lambda from over 200 AWS services and software as a service (SaaS) applications, and you only pay for what you use. Lambda's strength lies in its ability to interact with other AWS services. For example, a Lambda function can send received data to SQS or change the format of an image uploaded to S3. For a Lambda function to interact with a service, it needs an IAM role, known as an execution role (called the Lambda Role in this post). The Lambda Role contains the permissions of our Lambda function, such as permission to use all actions of the SNS service.

To interact with AWS services like SES, SNS, and Step Functions, a Lambda Role needs to be created with execution rights for these services. Here is a summary of the steps to create the Lambda Role:

Go to the IAM (Identity and Access Management) console and select "Roles".

Click on "Create Role" to start creating a new role.

Choose "AWS Service" as the trusted entity type and select "Lambda" as the use case.

Add permissions to the role by searching for the service names (SES, SNS, and Step Functions) and selecting "Full Access" to grant all necessary permissions.

Note: It is recommended to refine the permissions by specifying only the necessary rights for the actions you intend to perform with the services.

Validate and create the Lambda Role with a desired name.

Once the Lambda Role is created, you can proceed to create the first Lambda function, "email.py".

SES (Simple Email Service)- an email platform that provides an easy, cost-effective way for you to send and receive email using your own email addresses and domains

Before using SES, ensure that the sender's and recipient's email addresses are verified in SES.

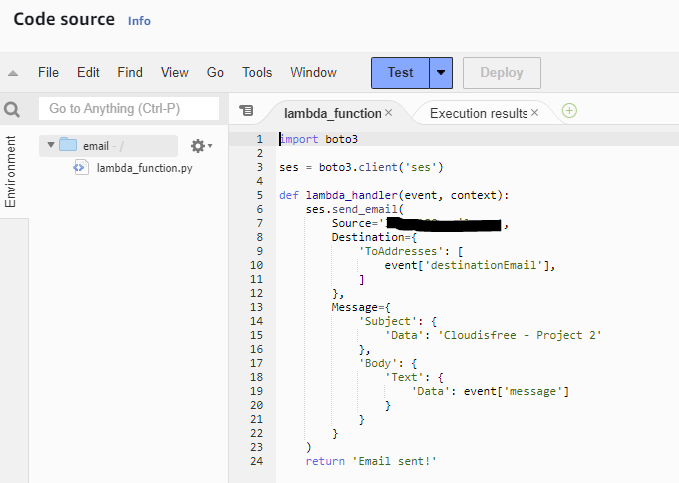

Create a Lambda function called "email" using Python 3.9.

Import the boto3 library to interact with AWS services.

Initialize the SES client using boto3.client('ses').

Define the lambda_handler function, which receives the event and context arguments.

Use the SES send_email function to send an email. Specify the source, destination, and message fields.

Retrieve the destination email address and message from the event argument instead of hard-coding them.

Add a return statement at the end of the function.

Deploy and test the Lambda function by configuring a test event with the destination email address and message.

Verify that the email is successfully sent and received.
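The steps above can be sketched as the following email.py. The event keys (email, message, subject) and the sender address are hypothetical and must match your own test event and a verified SES identity. The SES call is isolated in send_from_event, which takes the client as a parameter, so the logic can be tested with a fake client; lambda_handler creates the real boto3 client, which is included in the Lambda Python runtime.

```python
SENDER = "sender@example.com"  # hypothetical; must be a verified identity in SES


def send_from_event(event: dict, ses_client) -> dict:
    """Send the email described by `event` using an SES client."""
    response = ses_client.send_email(
        Source=SENDER,
        Destination={"ToAddresses": [event["email"]]},  # recipient comes from the event
        Message={
            "Subject": {"Data": event.get("subject", "Notification")},
            "Body": {"Text": {"Data": event["message"]}},
        },
    )
    return {"statusCode": 200, "messageId": response["MessageId"]}


def lambda_handler(event, context):
    import boto3  # imported here so the module also loads where boto3 is not installed

    return send_from_event(event, boto3.client("ses"))
```

A matching test event would look like {"email": "recipient@example.com", "message": "Hello from Lambda"}.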

Step Functions- a low-code, visual workflow service that helps developers use AWS services to build distributed applications, automate IT and business processes, orchestrate microservices, and create data and machine learning (ML) pipelines

I couldn’t verify my phone number so I was unable to get SMS to work.

To manage how messages are sent using Step Functions, follow these steps:

Access the Step Functions console and select "State machines".

Click on "Create state machine".

Choose the option to "Write your workflow in code".

Select the "Standard" type for the state machine, as it is eligible for the Free Tier.

Define the state machine using JSON code.

Start by adding a comment explaining the functionality of the state machine.

Set the starting point of the state machine using the "StartAt" field.

Create states for handling different types of sending, such as email or SMS.

Use the "Choice" type state to select the appropriate action based on the value of the "typeOfSending" variable.

Define the "Email" and "SMS" states as "Task" types, specifying the ARNs of the corresponding Lambda functions.

Mark the "Email" and "SMS" states as the end states.

Add the ARNs of the Lambda functions to the corresponding states.

Provide a name for the state machine and create it.

Start an execution of the state machine by providing a test input with the required variables.

Verify the successful execution of the state machine by checking the generated workflow graph and confirming the message delivery.
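The state machine described above can be written in Amazon States Language. The sketch below builds the definition as a Python dict and prints it as JSON for pasting into the console. The state names, the values "email" and "sms", and the Lambda ARNs (with the dummy account ID 123456789012) are placeholders. The next_state helper is a hypothetical local check that mimics the Choice state for simple "$.field" rules; in Step Functions itself, an input that matches no choice and has no Default fails with States.NoChoiceMatched.

```python
import json

EMAIL_ARN = "arn:aws:lambda:us-east-1:123456789012:function:email"  # placeholder
SMS_ARN = "arn:aws:lambda:us-east-1:123456789012:function:sms"      # placeholder

definition = {
    "Comment": "Route a message to the email or SMS Lambda based on typeOfSending",
    "StartAt": "SelectSendingType",
    "States": {
        "SelectSendingType": {
            "Type": "Choice",
            "Choices": [
                {"Variable": "$.typeOfSending", "StringEquals": "email", "Next": "Email"},
                {"Variable": "$.typeOfSending", "StringEquals": "sms", "Next": "SMS"},
            ],
        },
        "Email": {"Type": "Task", "Resource": EMAIL_ARN, "End": True},
        "SMS": {"Type": "Task", "Resource": SMS_ARN, "End": True},
    },
}


def next_state(defn: dict, payload: dict):
    """Locally mimic the starting Choice state for simple '$.field' StringEquals rules."""
    choice = defn["States"][defn["StartAt"]]
    for rule in choice["Choices"]:
        field = rule["Variable"][2:]  # strip the leading "$."
        if payload.get(field) == rule["StringEquals"]:
            return rule["Next"]
    return choice.get("Default")  # None here, since no Default state is defined


if __name__ == "__main__":
    print(json.dumps(definition, indent=2))
```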

With the completion of the state machine, you can proceed to the next step, which is creating the REST API handler using Lambda.

This resource was very helpful. https://tutorialsdojo.com/aws-cheat-sheets/

Cloud Bootcamp: Python and Git

Learning Python and Git go hand in hand. First, learn Python, and then learn Git to keep track of any changes you make to your code. Python is a great language to learn because it has a large community that you can lean on if you run into issues. Python can also be used to write AWS Lambda functions. The cool thing about Lambda functions is that they are often short and simple, so they work well when you want to do a small task. Git can make big coding projects easier to manage, and it is not hard to learn with a little practice.

Python web scraping project

pip install requests

pip install bs4

Get the link to a GitHub profile image

This code retrieves the profile image URL of a given GitHub user. Here's a breakdown of what each step does:

Import the necessary libraries:

requests: Allows making HTTP requests to retrieve web pages.

BeautifulSoup from bs4: A library for parsing HTML and XML documents.

Prompt the user to input a GitHub username.

Construct the URL of the user's GitHub profile by concatenating the input username to the base URL: 'https://github.com/' + github_user

Send an HTTP GET request to the constructed URL using requests.get(url) and store the response in the variable r.

Create a BeautifulSoup object named soup by parsing the content of the response using 'html.parser'.

Use soup.find() to locate an HTML img element with the attribute alt set to 'Avatar'. The result is stored in the profile_image variable.

Retrieve the value of the src attribute from the profile_image element using ['src'].

Finally, print the profile image URL.

In summary, this code prompts for a GitHub username, fetches the user's GitHub profile page, parses it using BeautifulSoup, and extracts the URL of the profile image.

Here is the code for the web scraping.

This is me inputting my GitHub profile name.
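The steps described above can be sketched as follows. This version deliberately swaps requests and BeautifulSoup for the standard library's urllib.request and html.parser so it runs without any installs; the logic is the same: build the profile URL, download the page, and take the src of the first img whose alt attribute is "Avatar". That selector comes from the description above, and GitHub's markup may have changed since, so the lookup can return None.

```python
import urllib.request
from html.parser import HTMLParser
from typing import Optional


class AvatarFinder(HTMLParser):
    """Remember the src of the first <img> whose alt attribute is 'Avatar'."""

    def __init__(self):
        super().__init__()
        self.src: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img" and self.src is None and attributes.get("alt") == "Avatar":
            self.src = attributes.get("src")


def find_avatar_url(html: str) -> Optional[str]:
    """Parse HTML text and return the avatar image URL, if any."""
    finder = AvatarFinder()
    finder.feed(html)
    return finder.src


def fetch_profile_image(github_user: str) -> Optional[str]:
    """Download a GitHub profile page and extract the avatar URL."""
    url = "https://github.com/" + github_user
    with urllib.request.urlopen(url) as response:
        html = response.read().decode("utf-8", errors="replace")
    return find_avatar_url(html)


if __name__ == "__main__":
    print(fetch_profile_image(input("GitHub username: ")))
```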

Here are some Python basics so you can create Python scripts too

Variables- a named value that can change, depending on conditions or on information passed to the program.

Receiving Input- Python's input() function, as the name suggests, takes input from the user

Type Conversion- the process of converting a value from one data type into another

String- a sequence of characters, such as letters, digits, spaces, or symbols.

Arithmetic Operators- symbols such as +, -, *, /, and % that perform a calculation on two operands.

Operator Precedence- the order in which Python evaluates the operators in an expression; for example, * is evaluated before +, so 2 + 3 * 4 is 14.

Comparison Operators- compare the values on either side of them and return a Boolean value

Logical Operators- Python has three logical (Boolean) operators: and, or, and not

If Statements- tell the Python interpreter to 'conditionally' execute a particular block of code

While Loops- run a piece of code while a condition is True. It will keep executing the desired set of code statements until that condition is no longer True

Lists- a data structure in Python that is a mutable, or changeable, ordered sequence of elements

For loops- the for loop in Python iterates over a sequence. If you have a sequence object like a list, you can use the for loop to iterate over the items contained within the list.

The range() Function- creates a sequence of numbers on the fly, like 0, 1, 2, 3, 4. This is very useful since the numbers can be used to index into sequences such as strings. The range() function can be called with one, two, or three arguments: range(stop), range(start, stop), or range(start, stop, step).

Tuples- an immutable, ordered sequence used to store multiple items in a single variable
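Here is a small script that puts most of these basics together: it receives input, converts a string into a list of integers, uses a while loop and arithmetic to compute an average, uses a for loop over range() with if statements and comparison and logical operators to label each score, and returns both results as a tuple. The function name, grade labels, and passing mark of 60 are made up for illustration.

```python
def grade_report(raw_scores: str, passing: int = 60) -> tuple:
    """Summarize comma-separated scores; returns a (lines, average) tuple."""
    # Type conversion: '90, 45, 72' (a string) becomes [90, 45, 72] (a list of ints).
    scores = [int(part) for part in raw_scores.split(",")]

    # While loop: add up the scores one index at a time.
    total = 0
    i = 0
    while i < len(scores):
        total = total + scores[i]
        i += 1
    average = total / len(scores)  # '/' always produces a float

    # For loop over range(), with comparison and logical operators.
    lines = []
    for i in range(len(scores)):
        score = scores[i]
        if score < 0 or score > 100:  # 'or': either condition marks it invalid
            label = "invalid"
        elif score >= 90:
            label = "excellent"
        elif score >= passing:
            label = "pass"
        else:
            label = "fail"
        lines.append("Student " + str(i + 1) + ": " + label)  # string concatenation
    return (lines, average)  # a tuple bundles both results


if __name__ == "__main__":
    raw = input("Enter scores separated by commas: ")  # receiving input
    report, avg = grade_report(raw)
    print("\n".join(report))
    print("Average:", avg)
```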

Git Basics

Git- a distributed version control system that tracks changes in any set of computer files, usually used for coordinating work among programmers collaboratively developing source code during software development

GitHub- a hosting platform for Git repositories. It provides the distributed version control of Git plus access control, bug tracking, software feature requests, task management, continuous integration, and wikis for every project

Git Repository- the .git/ folder inside a project. This repository tracks all changes made to files in your project, building history over time. Meaning, if you delete the .git/ folder, then you delete your project’s history.

Branch- branches are a part of your everyday development process. Git branches are effectively a pointer to a snapshot of your changes. When you want to add a new feature or fix a bug—no matter how big or how small—you spawn a new branch to encapsulate your changes

Fork- a new repository that shares code and visibility settings with the original “upstream” repository. Forks are often used to iterate on ideas or changes before they are proposed back to the upstream repository, such as in open source projects or when a user does not have write access to the upstream repository

Upstream- upstream refers to the original repo or branch. For example, when you clone from GitHub, the remote GitHub repo is upstream for the cloned local copy

Pull request- lets you tell others about changes you've pushed to a branch in a repository on GitHub. Once a pull request is opened, you can discuss and review the potential changes with collaborators and add follow-up commits before your changes are merged into the base branch.

Git stash- git stash temporarily shelves (or stashes) changes you've made to your working copy so you can work on something else, and then come back and re-apply them later on
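To see two of these ideas in action (a branch being just a pointer to a commit, and stash shelving work in progress), here is a small sketch you can run in a throwaway folder. It assumes git is installed; the identity (Demo / demo@example.com) and the file name notes.txt are placeholders.

```shell
#!/bin/bash
# Throwaway repository so nothing in your real projects is touched
repo=$(mktemp -d)
cd "$repo" || exit 1

# Placeholder identity so commits and stashes work on a fresh machine
export GIT_AUTHOR_NAME="Demo" GIT_AUTHOR_EMAIL="demo@example.com"
export GIT_COMMITTER_NAME="Demo" GIT_COMMITTER_EMAIL="demo@example.com"

git init -q
echo "first line" > notes.txt
git add notes.txt
git commit -q -m "Add notes"

# A new branch is just a pointer: it starts at the same commit as HEAD
git branch feature
git rev-parse HEAD feature        # prints the same commit ID twice

# Stash shelves uncommitted work so you can come back to it later
echo "work in progress" >> notes.txt
git stash push -q                 # working copy is clean again
git status --short                # prints nothing
git stash pop -q                  # the change is re-applied
git status --short                # prints " M notes.txt"
```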

Screenshot of me running git status

Screenshot of me running a git commit

Thanks for reading this blog post to the end. Useful links are below:

Cloud Bootcamp: Bash and Networking

Knowing bash scripting and networking is essential to learning more about cloud engineering. I started learning the fundamentals of how networking works in AWS and how bash scripting works. Mastering both skills will help me understand cloud engineering better.

Bash

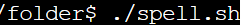

I created a script that asks a user for a folder and spell-checks every file in that folder.

Create text documents with misspelled words

Install aspell: sudo apt-get install aspell

Start your script like this- #! /bin/bash

for f in *.txt; do aspell check "$f"; done

This is a Bash script that performs a spell check using the aspell tool on all files with a ".txt" extension in the current directory.

The script starts with the shebang line "#! /bin/bash", which specifies that the script should be run using the Bash shell. The "for" loop iterates over all files in the current directory that end with ".txt", assigning each file name to the variable "f" in turn. The body of the loop calls "aspell check" with the file name stored in "f", which opens aspell's interactive checker for that file: it stops at each misspelled word and suggests corrections you can accept or skip. Overall, this script is a simple way to spell-check all text files in the current directory with the aspell command-line tool.
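The one-line loop checks the current directory, while the goal was to check a folder the user chooses. Here is a minimal sketch of that version, assuming aspell is installed; the function name spellcheck_folder is hypothetical. It also quotes the file names so files with spaces work, and skips the loop cleanly when a folder has no .txt files.

```shell
#!/bin/bash
# Spell-check every .txt file in the folder passed as the first argument.
# Assumes aspell is installed (sudo apt-get install aspell).
shopt -s nullglob   # a folder with no .txt files gives zero loop iterations

spellcheck_folder() {
  local dir=$1 f
  if [ ! -d "$dir" ]; then
    echo "Not a folder: $dir" >&2
    return 1
  fi
  for f in "$dir"/*.txt; do
    echo "Checking $f"
    aspell check "$f"   # interactive: steps through each misspelled word
  done
}
```

To ask the user for the folder, read it first and pass it in: read -r -p "Folder to check: " folder, then spellcheck_folder "$folder".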

Here are some basic Linux and bash commands so you can create bash scripts too.

Help- help and man.

Navigate- pwd, cd, pushd, and popd.

List content- ls.

Find files- whereis, which, and find.

Files and directories- mkdir, touch, mv, rm, cp, and rmdir.

View file contents- cat, head, tail, more, less, and grep.

Environment variables- env and export.

Modify permissions- chown, chgrp, and chmod.
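Here is a short session that strings several of these commands together inside a temporary folder, so it is safe to try. The folder and file names (notes, todo.txt, backup.txt) and the PROJECT_DIR variable are placeholders chosen for the example.

```shell
#!/bin/bash
workdir=$(mktemp -d)       # scratch folder so nothing real is touched
cd "$workdir" || exit 1
pwd                        # show where we are

mkdir notes                # create a directory
touch notes/todo.txt       # create an empty file
echo "learn bash" > notes/todo.txt
cp notes/todo.txt notes/backup.txt
ls notes                   # lists backup.txt and todo.txt

cat notes/todo.txt              # prints: learn bash
grep -n "bash" notes/todo.txt   # prints: 1:learn bash

chmod 600 notes/todo.txt   # owner can read and write; nobody else can
rm notes/backup.txt        # delete the copy

export PROJECT_DIR="$workdir"   # visible to programs started from this shell
env | grep PROJECT_DIR
```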

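What are variables in Bash? Think back to math class: a variable is a name that stands for a value. In Bash you assign a value with name=value (no spaces around the =) and read it back with $name. Variable names are case-sensitive, so hello_message and Hello_message are different variables.

hello_message='Hello World!'

echo "$hello_message"

To assign the output of a command to a variable, use command substitution. Ex. current_dir=$(pwd)

Use double quotes (" ") when you want variables expanded inside a string; single quotes (' ') keep the text exactly as written.

Constants- use readonly to create a variable that cannot change: readonly variable_wont_change="blue"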
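Putting those variable rules together in one runnable sketch (the variable names come from the examples above):

```shell
#!/bin/bash
# Assign a string; no spaces are allowed around "="
hello_message='Hello World!'
echo "$hello_message"            # prints: Hello World!

# Command substitution stores a command's output in a variable
current_dir=$(pwd)
echo "You are in $current_dir"

# Double quotes expand variables; single quotes keep the text literal
echo "Message: $hello_message"   # prints: Message: Hello World!
echo 'Message: $hello_message'   # prints: Message: $hello_message

# readonly turns a variable into a constant; reassigning it is an error
readonly variable_wont_change="blue"
```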

Conditional statements- let you write code that performs different tasks based on specified checks.

Case statements- The case statement simplifies complex conditions with multiple different choices.

Functions- a way to group reusable blocks of code under a name so you can call them from anywhere in a script.

Loops- statements that let a block of code run repeatedly.

Use break to exit a loop early, and continue to skip to the next iteration.

There are three types of loops in Bash: while, until, and for.
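The sketch below shows each of these constructs in a small function. The function names (check_size, describe_color, count_up_to, countdown, next_power_of_two) are made up for illustration.

```shell
#!/bin/bash
# Conditional statement: choose a message from a numeric comparison
check_size() {
  if [ "$1" -gt 10 ]; then
    echo "big"
  elif [ "$1" -gt 0 ]; then
    echo "small"
  else
    echo "zero or negative"
  fi
}

# Case statement: one branch per choice instead of a long if/elif chain
describe_color() {
  case "$1" in
    red|orange) echo "warm" ;;
    blue|green) echo "cool" ;;
    *)          echo "unknown" ;;
  esac
}

# for loop with break: stop as soon as the counter passes the limit
count_up_to() {
  local i
  for i in 1 2 3 4 5; do
    if [ "$i" -gt "$1" ]; then
      break
    fi
    echo "$i"
  done
}

# while loop: repeat as long as the condition is true
countdown() {
  local n=$1
  while [ "$n" -gt 0 ]; do
    echo "$n"
    n=$((n - 1))
  done
}

# until loop: repeat as long as the condition is false
next_power_of_two() {
  local n=1
  until [ "$n" -ge "$1" ]; do
    n=$((n * 2))
  done
  echo "$n"
}

check_size 42          # prints: big
describe_color blue    # prints: cool
count_up_to 3          # prints 1, 2 and 3 on separate lines
next_power_of_two 20   # prints: 32
```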

How to write a bash script.

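Create a new file named hello.sh and open it with nano: nano hello.sh

On the first line, specify the interpreter to be used in the code, in this case Bash: #!/bin/bash. The first part of the line, #!, is called the "shebang", and it tells the system which interpreter should run the script. Below it, add the commands you want to run.

Save the file and exit nano by pressing CTRL + X, then Y and Enter. Then make the script executable with chmod +x hello.sh and run it with ./hello.sh.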
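Here is the same workflow as a script, with a heredoc standing in for typing into nano. The scratch folder and the greeting variable are assumptions for the example.

```shell
#!/bin/bash
# Work in a scratch folder (stands in for wherever you created hello.sh)
cd "$(mktemp -d)" || exit 1

# The contents you would type into nano: the shebang first, then the commands
cat > hello.sh <<'EOF'
#!/bin/bash
greeting="Hello World!"
echo "$greeting"
EOF

chmod +x hello.sh   # make the file executable
./hello.sh          # prints: Hello World!
```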

AWS Networking

AWS networking basics

CIDR- Classless Inter-Domain Routing (CIDR) is an IP address allocation method that improves data routing efficiency on the internet.

Subnets- a range of IP addresses in your VPC.

Internet gateway- a horizontally scaled, redundant, and highly available VPC component that allows communication between your VPC and the internet.

Route tables- contain a set of rules, called routes, that determine where network traffic from your subnet or gateway is directed.

NAT gateway- a highly available AWS-managed service that makes it easy to connect to the internet from instances within a private subnet in an Amazon Virtual Private Cloud (Amazon VPC).

NACL (network access control list)- an optional layer of security that acts as a firewall for controlling traffic in and out of a subnet.

Security group- acts as a virtual firewall for your EC2 instances to control incoming and outgoing traffic.

API Gateway- makes it simple to create, publish, maintain, monitor, and secure APIs at scale.

CloudFront- a content delivery network (CDN) that delivers data, videos, applications, and APIs to users faster by caching them at edge locations close to viewers.

Route 53- a DNS service that translates domain names into IP addresses (and, with reverse DNS records, IP addresses back into names) so computers can connect with each other. For example, it can translate www.forexample.com into 192.0.7.1.

VPC- gives you an isolated section of the AWS cloud.

App Mesh- monitors and controls microservices.

Cloud Map- With AWS Cloud Map, you can define custom names for your application resources, and it maintains the updated location of these dynamically changing resources.

Direct Connect- makes it easy to establish a dedicated network connection from your premises to AWS.

Global Accelerator- a networking service that improves the availability and performance of the applications that you offer to your global users.

PrivateLink- simplifies the security of data shared with cloud-based applications by eliminating the exposure of data to the public internet.

Private 5G- an easy way to use cellular technology to augment your current network.

Transit Gateway- a service that enables customers to connect their Amazon Virtual Private Clouds (VPCs) and their on-premises networks to a single gateway.

VPN- AWS VPN solutions establish secure connections between your on-premises networks, remote offices, client devices, and the AWS global network.

Elastic Load Balancing- automatically distributes incoming application traffic across multiple targets, such as Amazon EC2 instances, containers, and IP addresses.

Integrated Private Wireless on AWS- designed to provide enterprises with managed and validated private wireless offerings from leading Communications Service Providers.
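To make CIDR blocks and route tables concrete, here is a small Bash sketch. It computes block sizes, checks whether an address falls inside a block, and mimics how a route table picks the most specific matching route (longest prefix match). The "CIDR=target" route notation and the igw-example target are made up for illustration. The 5 reserved addresses per subnet reflect AWS's documented behavior.

```shell
#!/bin/bash
# Convert a dotted IPv4 address such as 10.0.1.25 into a 32-bit integer
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Number of addresses in a CIDR block: 2^(32 - prefix length)
cidr_size() {
  local prefix=${1#*/}
  echo $(( 1 << (32 - prefix) ))
}

# AWS reserves 5 addresses in every subnet (the first four and the last one)
aws_usable_ips() {
  echo $(( $(cidr_size "$1") - 5 ))
}

# True if the IP shares the block's network bits (the first "prefix" bits)
ip_in_cidr() {
  local net=${2%/*} prefix=${2#*/}
  local mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$1") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

# Route-table style lookup: among routes whose destination contains the IP,
# the most specific one (longest prefix) wins. Routes are written "CIDR=target".
pick_route() {
  local ip=$1 route cidr best_target="" best_prefix=-1
  shift
  for route in "$@"; do
    cidr=${route%%=*}
    if ip_in_cidr "$ip" "$cidr" && [ "${cidr#*/}" -gt "$best_prefix" ]; then
      best_prefix=${cidr#*/}
      best_target=${route#*=}
    fi
  done
  echo "$best_target"
}

cidr_size 10.0.0.0/16        # prints: 65536
aws_usable_ips 10.0.1.0/24   # prints: 251
pick_route 10.0.1.25 "10.0.0.0/16=local" "0.0.0.0/0=igw-example"  # prints: local
pick_route 8.8.8.8 "10.0.0.0/16=local" "0.0.0.0/0=igw-example"    # prints: igw-example
```

The last two calls show why a VPC's local route and a 0.0.0.0/0 route to an internet gateway can coexist: traffic inside the VPC matches the more specific /16, and everything else falls through to the default route.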

VPN architecture breakdown

VPC- the VPC contains the private subnet, and the VPN gateway is attached to it.

Security Group- the security group controls which traffic coming in over the VPN can reach the resources in the private subnet.

Private subnet- the private subnet can be used to host the resources that need to be accessed via the VPN.

Route table- a separate route table can be created for the private subnet that routes traffic through the VPN gateway.

VPN gateway- used to establish the VPN connection to the customer gateway.

VPN connection- the VPN connection is established over the internet as encrypted IPsec tunnels.

Customer gateway- the customer gateway can be configured to establish the VPN connection to the VPN gateway hosted in the VPC.

Why would you use this diagram? This architecture can provide a secure and reliable way for customers to access their resources hosted in AWS using a VPN connection.
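Here is a sketch of how the pieces of this VPN architecture map to AWS CLI calls. It is not a production script: by default (DRY_RUN=1) it only prints each command and uses a placeholder ID, and real resources take time to become available, so you may need to wait between steps. The CIDR ranges, the on-premises public IP (203.0.113.10), the BGP ASN, the group name, and the SSH-only rule are all assumptions.

```shell
#!/bin/bash
# DRY_RUN=1 (the default) only prints each command and returns a placeholder ID;
# set DRY_RUN=0 to create real (billable) resources in your account.
DRY_RUN=${DRY_RUN:-1}

aws_cli() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "aws $*" >&2          # show the command instead of running it
    echo "placeholder-id"      # stand-in for the ID AWS would return
  else
    aws "$@"
  fi
}

# Placeholder values: replace with your own network details
ONPREM_PUBLIC_IP="203.0.113.10"   # public IP of the on-premises VPN device
ONPREM_CIDR="192.168.0.0/16"      # on-premises network behind it

vpc_id=$(aws_cli ec2 create-vpc --cidr-block 10.0.0.0/16 \
  --query Vpc.VpcId --output text)
subnet_id=$(aws_cli ec2 create-subnet --vpc-id "$vpc_id" --cidr-block 10.0.1.0/24 \
  --query Subnet.SubnetId --output text)

# Customer gateway: the AWS-side record of your on-premises VPN device
cgw_id=$(aws_cli ec2 create-customer-gateway --type ipsec.1 \
  --public-ip "$ONPREM_PUBLIC_IP" --bgp-asn 65000 \
  --query CustomerGateway.CustomerGatewayId --output text)

# VPN gateway, attached to the VPC
vgw_id=$(aws_cli ec2 create-vpn-gateway --type ipsec.1 \
  --query VpnGateway.VpnGatewayId --output text)
aws_cli ec2 attach-vpn-gateway --vpn-gateway-id "$vgw_id" --vpc-id "$vpc_id" > /dev/null

# VPN connection between the two gateways, with a static route to on-premises
vpn_id=$(aws_cli ec2 create-vpn-connection --type ipsec.1 \
  --customer-gateway-id "$cgw_id" --vpn-gateway-id "$vgw_id" \
  --options StaticRoutesOnly=true \
  --query VpnConnection.VpnConnectionId --output text)
aws_cli ec2 create-vpn-connection-route --vpn-connection-id "$vpn_id" \
  --destination-cidr-block "$ONPREM_CIDR" > /dev/null

# Separate route table for the private subnet: on-premises traffic goes to the VPN gateway
rtb_id=$(aws_cli ec2 create-route-table --vpc-id "$vpc_id" \
  --query RouteTable.RouteTableId --output text)
aws_cli ec2 create-route --route-table-id "$rtb_id" \
  --destination-cidr-block "$ONPREM_CIDR" --gateway-id "$vgw_id" > /dev/null
aws_cli ec2 associate-route-table --route-table-id "$rtb_id" \
  --subnet-id "$subnet_id" > /dev/null

# Security group: only allow SSH from the on-premises network
sg_id=$(aws_cli ec2 create-security-group --group-name vpn-private-sg \
  --description "Access from on-premises over VPN" --vpc-id "$vpc_id" \
  --query GroupId --output text)
aws_cli ec2 authorize-security-group-ingress --group-id "$sg_id" \
  --protocol tcp --port 22 --cidr "$ONPREM_CIDR" > /dev/null
```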

Secure web application breakdown

VPC- the VPC can be used to host the public subnet.

Public subnet- the public subnet can be used to host the web application.

Security group- the security group can be used to control access to the web application.

Route table- a separate route table can be created for the public subnet that routes traffic through the internet gateway.

Internet gateway- the internet gateway can be used to enable internet access to the web application.

Why would you use this diagram? This architecture provides a scalable and secure way for customers to host web applications on AWS in a public subnet that can be accessed over the internet. The security group controls access to the web application, while the route table and internet gateway give it internet access.
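The same components can be sketched with the AWS CLI. As with any sketch, treat it as a starting point: by default (DRY_RUN=1) it only prints the commands with placeholder IDs. The CIDR ranges, the group name web-app-sg, and the HTTPS-only ingress rule are assumptions, not part of the original diagram.

```shell
#!/bin/bash
# DRY_RUN=1 (the default) only prints each command and returns a placeholder ID;
# set DRY_RUN=0 to create real resources in your account.
DRY_RUN=${DRY_RUN:-1}

aws_cli() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "aws $*" >&2          # show the command instead of running it
    echo "placeholder-id"      # stand-in for the ID AWS would return
  else
    aws "$@"
  fi
}

# VPC and a public subnet (CIDR ranges are example values)
vpc_id=$(aws_cli ec2 create-vpc --cidr-block 10.0.0.0/16 \
  --query Vpc.VpcId --output text)
subnet_id=$(aws_cli ec2 create-subnet --vpc-id "$vpc_id" --cidr-block 10.0.1.0/24 \
  --query Subnet.SubnetId --output text)
aws_cli ec2 modify-subnet-attribute --subnet-id "$subnet_id" \
  --map-public-ip-on-launch > /dev/null

# Internet gateway attached to the VPC
igw_id=$(aws_cli ec2 create-internet-gateway \
  --query InternetGateway.InternetGatewayId --output text)
aws_cli ec2 attach-internet-gateway --internet-gateway-id "$igw_id" \
  --vpc-id "$vpc_id" > /dev/null

# Separate route table for the public subnet: non-local traffic goes to the internet gateway
rtb_id=$(aws_cli ec2 create-route-table --vpc-id "$vpc_id" \
  --query RouteTable.RouteTableId --output text)
aws_cli ec2 create-route --route-table-id "$rtb_id" \
  --destination-cidr-block 0.0.0.0/0 --gateway-id "$igw_id" > /dev/null
aws_cli ec2 associate-route-table --route-table-id "$rtb_id" \
  --subnet-id "$subnet_id" > /dev/null

# Security group that only lets HTTPS traffic reach the web application
sg_id=$(aws_cli ec2 create-security-group --group-name web-app-sg \
  --description "HTTPS access to the web app" --vpc-id "$vpc_id" \
  --query GroupId --output text)
aws_cli ec2 authorize-security-group-ingress --group-id "$sg_id" \
  --protocol tcp --port 443 --cidr 0.0.0.0/0 > /dev/null
```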

I hope you enjoyed this blog post. Please see the two links below to learn more about AWS networking and bash scripting.

THINGS I LEARNED FROM THE AWS CERTIFIED SECURITY SPECIALTY CERTIFICATION

Cloud security is a set of methods and technologies designed to protect against external and internal threats to business security. Organizations use cloud-based services every day, so they need cloud security. I pursued this certification because I wanted to learn more about cloud security from an AWS perspective, and the exam challenged my knowledge of cloud security in new ways.


1. The difference between IAM policies and service control policies (SCPs).

SCPs affect all IAM identities in a member account, including the member account's root user. With AWS Organizations, you can use SCPs to allow or deny access to AWS services for individual AWS accounts; an SCP sets the maximum permissions available in an account but does not grant any permissions by itself. IAM policies allow or deny access to AWS services or API actions that work with IAM. An IAM policy can be applied only to IAM identities (users, groups, or roles), and IAM policies can't restrict the AWS account root user.

2. How CloudTrail and CloudWatch work together.

CloudTrail can send records of the API calls made to resources and services in your AWS accounts to CloudWatch Logs. These events contain invaluable information for compliance, auditing, and governance of your AWS accounts. In CloudWatch, you can then monitor the CloudTrail logs, for example with metric filters and alarms on specific API calls.

3. When to use AWS WAF vs. AWS Shield.

You can use AWS WAF and AWS Shield together to create a complete security solution. AWS WAF is a web application firewall that can block attacks such as SQL injection and cross-site scripting. AWS Shield protects AWS resources against distributed denial of service (DDoS) attacks at the network and transport layers (layers 3 and 4) and, with Shield Advanced, at the application layer (layer 7).

4. The difference between GuardDuty and Inspector.

Amazon Inspector provides security assessments of the application settings and configurations on your EC2 instances, while Amazon GuardDuty continuously analyzes your entire AWS environment (for example CloudTrail events, VPC Flow Logs, and DNS logs) for potential threats.

5. The difference between AWS Secrets Manager and AWS Systems Manager Parameter Store.

One key difference is the ability to rotate secrets. AWS Secrets Manager can rotate a secret on demand and can be configured to rotate it automatically on a schedule that fits your requirements. One advantage of Parameter Store is cost: standard parameters are free, and you can store up to 10,000 of them without being billed.

6. How CloudFront can work with Certificate Manager.

To use a certificate in AWS Certificate Manager (ACM) to require HTTPS between viewers and CloudFront, make sure you request (or import) the certificate in the US East (N. Virginia) Region (us-east-1). If you want to require HTTPS between CloudFront and your origin, and you’re using a load balancer in Elastic Load Balancing as your origin, you can request or import the certificate in any AWS Region.

7. The difference between Cognito user pools and identity pools.

User pools are for authentication (identity verification). With a user pool, your app users can sign in through the user pool or federate through a third-party identity provider (IdP). Identity pools are for authorization: they give users temporary AWS credentials to access AWS resources, such as an Amazon Simple Storage Service (Amazon S3) bucket or an Amazon DynamoDB table.
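Points 5 and 6 translate directly into a few AWS CLI calls. This is a sketch that only prints the commands by default (DRY_RUN=1); the domain www.example.com, the parameter and secret names, the 30-day schedule, and ROTATION_LAMBDA_ARN are placeholders you would replace.

```shell
#!/bin/bash
# DRY_RUN=1 (the default) only prints each command; set DRY_RUN=0 to run them.
DRY_RUN=${DRY_RUN:-1}

aws_cli() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "aws $*" >&2          # show the command instead of running it
    echo "placeholder-id"      # stand-in for the response AWS would return
  else
    aws "$@"
  fi
}

# Placeholder values
DOMAIN="www.example.com"
ROTATION_LAMBDA_ARN=${ROTATION_LAMBDA_ARN:-"<your-rotation-lambda-arn>"}

# (6) A certificate used between viewers and CloudFront must be requested in
# us-east-1, whatever Region the rest of your stack runs in
aws_cli acm request-certificate --domain-name "$DOMAIN" \
  --validation-method DNS --region us-east-1 > /dev/null

# (5) Parameter Store: a standard-tier parameter (no storage charge)
aws_cli ssm put-parameter --name /myapp/db-host \
  --value db.internal.example --type String --tier Standard > /dev/null

# (5) Secrets Manager: store a secret, then rotate it automatically every 30 days
aws_cli secretsmanager create-secret --name myapp/db-password \
  --secret-string "change-me" > /dev/null
aws_cli secretsmanager rotate-secret --secret-id myapp/db-password \
  --rotation-lambda-arn "$ROTATION_LAMBDA_ARN" \
  --rotation-rules AutomaticallyAfterDays=30 > /dev/null
```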

Resources used to pass this certification:

1. A Cloud Guru

2. AWS Skill Builder courses and practice test

- AWS Certified Security - Specialty Official Practice Question Set

- Exam Readiness: AWS Certified Security – Specialty

- AWS Security Fundamentals (Second Edition)

3. Tutorials Dojo practice tests and cheat sheet

- AWS Certified Security Specialty Practice Exams 2023

- AWS Certified Security – Specialty Exam Guide Study Path SCS-C01

4. Whizlabs labs and practice tests

5. FAQs located on the official AWS Certified Security – Specialty page.

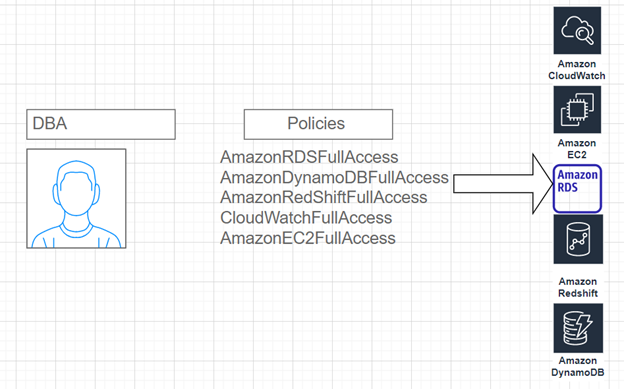

AWS IAM Errors

In this mini-project, I act as a system administrator who needs to set up database administrators with the proper access permissions. To provide access and make sure the appropriate security measures are in place, I will use AWS Identity and Access Management (IAM) and attach the necessary AWS-managed policy that allows full access to Amazon Relational Database Service (RDS), DynamoDB, and Redshift.
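For readers who prefer the command line, here is a sketch of one way to grant that access with the AWS CLI: a group with the three service-specific AWS-managed full-access policies attached. It only prints the commands by default (DRY_RUN=1). The group name DatabaseAdmins and the user name db-admin-1 are hypothetical, and this is not necessarily the exact policy setup used in this project.

```shell
#!/bin/bash
# DRY_RUN=1 (the default) only prints each command; set DRY_RUN=0 to run them.
DRY_RUN=${DRY_RUN:-1}

aws_cli() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "aws $*" >&2          # show the command instead of running it
  else
    aws "$@"
  fi
}

GROUP_NAME="DatabaseAdmins"    # hypothetical group name
USER_NAME="db-admin-1"         # hypothetical user name

# AWS-managed policies that give full access to each database service
POLICIES=(
  AmazonRDSFullAccess
  AmazonDynamoDBFullAccess
  AmazonRedshiftFullAccess
)

aws_cli iam create-group --group-name "$GROUP_NAME" > /dev/null
for policy in "${POLICIES[@]}"; do
  aws_cli iam attach-group-policy --group-name "$GROUP_NAME" \
    --policy-arn "arn:aws:iam::aws:policy/$policy" > /dev/null
done

# Database administrators get the permissions by joining the group
aws_cli iam create-user --user-name "$USER_NAME" > /dev/null
aws_cli iam add-user-to-group --group-name "$GROUP_NAME" \
  --user-name "$USER_NAME" > /dev/null
```

Attaching policies to a group rather than to each user keeps permissions in one place: adding or removing a database administrator is a single group-membership change.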

What is IAM?

AWS Identity and Access Management (IAM) is a web service that helps you securely control access to AWS resources. With IAM, you can centrally control permissions that control which AWS resources users can gain access to. You use IAM to control who is authenticated (signed in) and authorized (has permissions) to use resources.

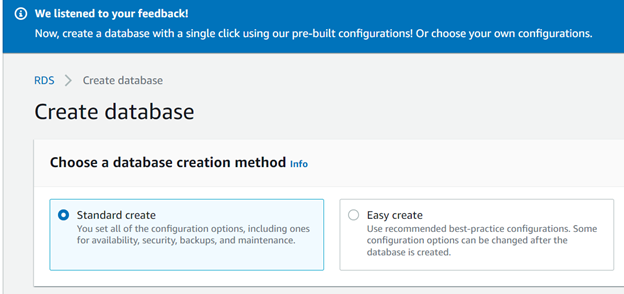

What is RDS?

Amazon Relational Database Service (RDS) is a managed relational database service from Amazon Web Services. It runs "in the cloud" and is designed to make it easy to set up, operate, and scale a relational database.

What is Redshift?

Amazon Redshift is a data warehouse service, used primarily for analytics, that can handle large-scale amounts of data.

What is DynamoDB?